Manufacturing industries face unprecedented pressure to accelerate product development cycles whilst maintaining quality standards and reducing costs. Advanced simulation technologies have emerged as transformative tools that fundamentally reshape how companies approach innovation, enabling organisations to compress traditional development timelines from years to months. These sophisticated digital platforms allow engineers and designers to test, validate, and optimise products virtually before physical prototypes exist, reducing costly design iterations and manufacturing delays.

The convergence of computational power, artificial intelligence, and cloud computing has revolutionised simulation capabilities, making previously impossible analyses routine. Modern simulation environments can model complex physical phenomena with remarkable accuracy, from fluid dynamics in automotive engines to molecular interactions in pharmaceutical compounds. This technological evolution has created new paradigms where virtual testing often precedes physical testing, dramatically reducing the resources required for product development whilst improving final product quality.

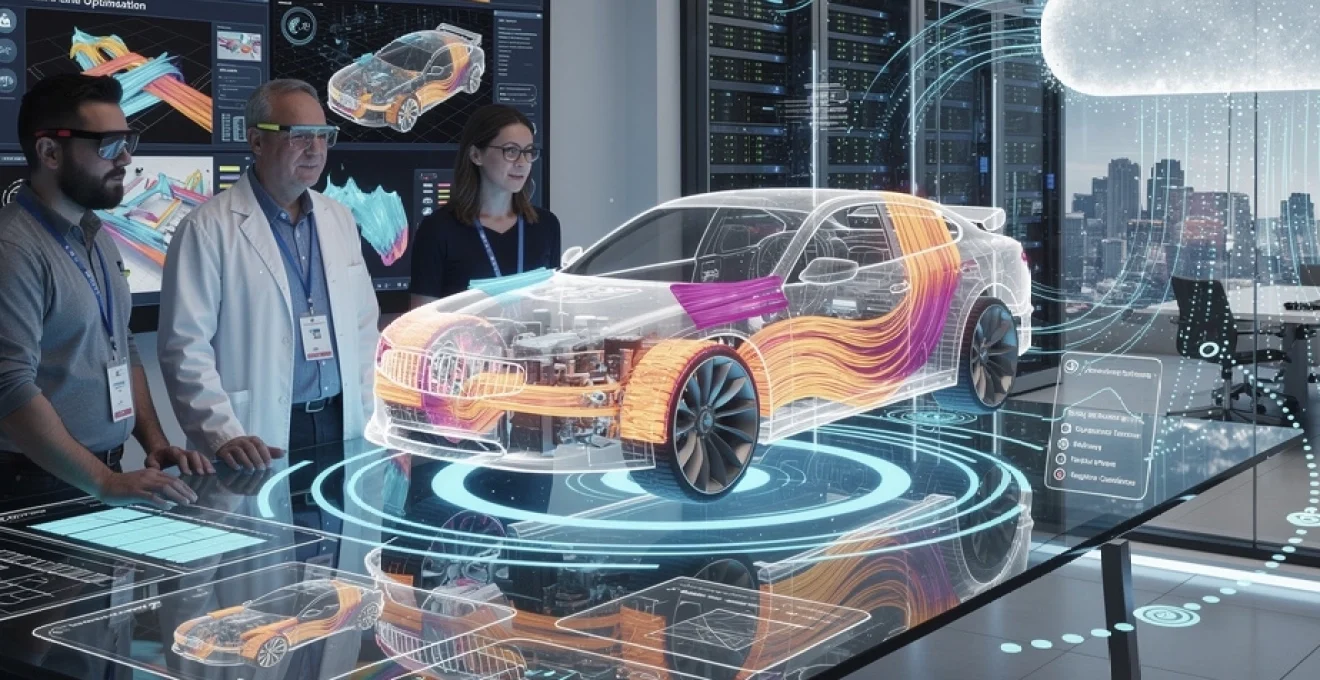

Digital twin technology integration in product development pipelines

Digital twin technology represents a paradigm shift in product development, creating virtual replicas of physical systems that evolve alongside their real-world counterparts. This technology integrates into modern development pipelines, providing continuous feedback loops between design, manufacturing, and operational phases. Companies implementing digital twins report reductions in development time of up to 40%, whilst simultaneously improving product reliability and performance metrics.

The integration process involves connecting multiple data sources, including CAD models, sensor networks, manufacturing equipment, and operational databases. This comprehensive data ecosystem enables real-time monitoring and predictive capabilities that were previously impossible. Engineers can now observe how design changes impact manufacturing processes, product performance, and lifecycle costs before committing to expensive tooling or production modifications.

ANSYS Twin Builder implementation for real-time product validation

ANSYS Twin Builder serves as a comprehensive platform for creating physics-based digital twins that accurately represent complex system behaviour. The platform excels in combining multiple physics domains, allowing engineers to model mechanical, electrical, thermal, and fluid systems within a single environment. This multiphysics approach enables validation of product interactions that traditional single-domain simulations cannot capture effectively.

Implementation typically involves creating reduced-order models from high-fidelity simulations, optimising computational efficiency without sacrificing accuracy. These models integrate with real-time data streams, enabling continuous validation against actual operating conditions. Manufacturing teams utilise Twin Builder to predict equipment maintenance needs, optimise production parameters, and identify potential quality issues before they impact production schedules.
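As a minimal sketch of this idea, assuming a single lumped thermal mass stands in for the reduced-order model, the Python below steps a first-order model alongside a sensor trace and flags samples where the two disagree. The class `ThermalRom`, its parameters, and the threshold are hypothetical illustrations and are not part of Twin Builder's API.

```python
class ThermalRom:
    """First-order lumped model: tau * dT/dt = (ambient + gain * power) - T."""

    def __init__(self, tau_s, gain_c_per_w, ambient_c):
        self.tau_s = tau_s                # thermal time constant (assumed value)
        self.gain_c_per_w = gain_c_per_w  # steady-state rise per watt (assumed value)
        self.ambient_c = ambient_c
        self.temp_c = ambient_c           # start at ambient temperature

    def step(self, power_w, dt_s):
        """Advance one explicit-Euler step and return the new temperature."""
        target = self.ambient_c + self.gain_c_per_w * power_w
        self.temp_c += dt_s / self.tau_s * (target - self.temp_c)
        return self.temp_c


def residuals(rom, power_trace, measured_trace, dt_s):
    """Model-minus-measurement residual for each sample of the data stream."""
    return [rom.step(p, dt_s) - m for p, m in zip(power_trace, measured_trace)]


def flag_drift(residual_trace, threshold_c):
    """Indices where the twin and the sensor disagree by more than the threshold."""
    return [i for i, r in enumerate(residual_trace) if abs(r) > threshold_c]
```

A persistent run of flagged samples would suggest either sensor trouble or a change in the physical asset that the model no longer captures; which of the two applies needs engineering judgement.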

Siemens NX digital twin architecture for manufacturing process optimisation

Siemens NX provides an integrated digital twin architecture that spans the entire product lifecycle, from initial concept through end-of-life decommissioning. The platform’s strength lies in its ability to maintain data continuity across design, simulation, manufacturing, and service phases. This seamless integration ensures that insights gained during operation feed back into design improvements for future iterations.

The architecture supports collaborative workflows where design teams, manufacturing engineers, and service technicians access shared digital models. Changes made in manufacturing processes automatically update design documentation, whilst field performance data influences future design decisions. This closed-loop approach accelerates innovation cycles by ensuring lessons learned are immediately incorporated into ongoing projects.

PTC ThingWorx platform integration with IoT sensor networks

PTC ThingWorx creates powerful connections between digital twins and Internet of Things sensor networks, enabling real-time data ingestion from deployed products. The platform excels in handling diverse sensor types and communication protocols, creating unified data streams from complex distributed systems. This capability proves particularly valuable for products operating in harsh environments where traditional monitoring approaches prove inadequate.

Integration with sensor networks enables predictive maintenance strategies that reduce unplanned downtime by up to 60%. Manufacturers leverage this capability to optimise maintenance schedules, predict component failures, and identify usage patterns that inform future product designs. The platform’s analytics capabilities transform raw sensor data into actionable insights that drive continuous improvement initiatives.
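One simple way to turn a sensor trend into a maintenance estimate is to fit a straight line and extrapolate to an alarm limit. The sketch below assumes a roughly linear degradation trend, which is a strong simplification; the function name and the vibration units are illustrative and do not come from ThingWorx.

```python
def hours_until_limit(hours, vibration_mm_s, limit_mm_s):
    """Fit a least-squares line to the trend and extrapolate to the alarm limit.

    Returns the estimated operating hours remaining after the last sample,
    or None when the trend is flat or improving.
    """
    n = len(hours)
    mean_t = sum(hours) / n
    mean_v = sum(vibration_mm_s) / n
    sxx = sum((t - mean_t) ** 2 for t in hours)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(hours, vibration_mm_s))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_v - slope * mean_t
    crossing = (limit_mm_s - intercept) / slope  # hour at which the line hits the limit
    return max(0.0, crossing - hours[-1])
```

In practice the fit would be refreshed as new readings arrive, and a confidence band around the line would be more useful than a single number.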

MATLAB Simulink digital twin modelling for automotive systems

MATLAB Simulink provides sophisticated modelling capabilities that are widely used for automotive control systems and vehicle dynamics. The platform’s strength lies in its ability to model complex control algorithms alongside physical system behaviour, enabling comprehensive validation of automotive innovations. Engineers utilise Simulink to test autonomous driving algorithms, powertrain control strategies, and safety systems within realistic virtual environments.

The automotive industry leverages these capabilities to reduce physical prototyping, accelerate homologation, and shorten validation cycles. By linking Simulink models with hardware-in-the-loop (HIL) test benches, engineers validate control software against a high-fidelity digital twin before a physical vehicle exists. This approach helps automotive manufacturers uncover edge cases, validate functional safety requirements, and iterate software releases in weeks instead of months, directly improving time-to-market for new vehicle platforms.
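The closed-loop principle behind this kind of validation can be sketched in plain Python, as a software-only stand-in for a Simulink model or HIL bench. The plant below is an assumed first-order vehicle model with made-up mass, drag, and actuator limits; the controller is a PI loop with a simple anti-windup rule.

```python
def simulate_cruise_control(target_mps, kp, ki, steps=3000, dt=0.01):
    """Simulate a PI speed controller against a simple longitudinal plant.

    Assumed plant: mass * dv/dt = force - drag * v. Returns the speed history.
    """
    mass_kg, drag = 1200.0, 50.0   # illustrative values, not from a real vehicle
    max_force_n = 4000.0           # actuator saturation limit (assumed)
    speed, integral = 0.0, 0.0
    history = []
    for _ in range(steps):
        error = target_mps - speed
        candidate = integral + error * dt
        raw = kp * error + ki * candidate
        force = max(-max_force_n, min(max_force_n, raw))
        if force == raw:
            integral = candidate   # anti-windup: integrate only when unsaturated
        speed += dt * (force - drag * speed) / mass_kg
        history.append(speed)
    return history


history = simulate_cruise_control(20.0, kp=800.0, ki=100.0)
print(f"final speed: {history[-1]:.2f} m/s, peak: {max(history):.2f} m/s")
```

Checks such as overshoot and settling limits can then be asserted against the returned history, which is the same pattern a requirements-based test on a HIL bench follows with real controller hardware in the loop.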

Dassault Systèmes 3DEXPERIENCE virtual prototyping workflows

Dassault Systèmes 3DEXPERIENCE offers a unified environment where CAD, CAE, PLM, and manufacturing simulation coexist on a single data model. This tight integration enables virtual prototyping workflows that span concept design, structural validation, manufacturing feasibility, and service planning. Instead of passing files between disconnected tools, teams collaborate around a shared digital twin, reducing handoff delays and version conflicts.

Within 3DEXPERIENCE, designers can run structural, thermal, and kinematic simulations directly on parametric models, then feed validated designs into DELMIA for virtual manufacturing process planning. Virtual build events, assembly sequence simulations, and ergonomics assessments highlight potential bottlenecks and safety risks before physical lines are commissioned. Organisations that adopt these virtual prototyping workflows often report reductions of 30–50% in physical prototype builds, significantly cutting both development time and cost.

Computational fluid dynamics acceleration through advanced simulation frameworks

Computational Fluid Dynamics (CFD) has long been essential for optimising aerodynamics, thermal management, and mixing processes. However, traditional CFD workflows are computationally expensive, often requiring days of runtime for a single design iteration. Advanced simulation frameworks now combine reduced-order models, adaptive meshing, and GPU acceleration to compress CFD turnaround times from days to hours, or even minutes, without sacrificing critical accuracy.

Modern CFD platforms, such as ANSYS Fluent, Siemens Simcenter STAR-CCM+, and OpenFOAM-based frameworks, integrate automation for mesh generation, solver configuration, and post-processing. By embedding parametric studies and design-of-experiments directly into these tools, engineers can sweep hundreds of design variants overnight. For example, optimising an electric vehicle’s battery thermal management system via accelerated CFD allows teams to converge on robust cooling strategies earlier, reducing late-stage redesigns that typically delay market launch.
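A parametric sweep of this kind reduces to enumerating factor combinations and evaluating each one. The sketch below builds a full-factorial design with the standard library; `peak_cell_temp_c` is a made-up algebraic placeholder standing in for a CFD run, and the factor names and levels are assumptions chosen only for illustration.

```python
from itertools import product


def full_factorial(levels):
    """Yield every combination of the given factor levels as a dict."""
    names = list(levels)
    for values in product(*(levels[name] for name in names)):
        yield dict(zip(names, values))


def peak_cell_temp_c(design):
    """Placeholder for a CFD evaluation: an invented response, not real physics."""
    return (25.0
            + 60.0 / design["flow_lpm"]
            + 0.2 * design["inlet_c"]
            - 1.5 * design["channel_mm"])


levels = {
    "flow_lpm": [4.0, 8.0, 12.0],   # coolant flow rate
    "inlet_c": [15.0, 25.0],        # coolant inlet temperature
    "channel_mm": [2.0, 3.0],       # cooling channel width
}
best = min(full_factorial(levels), key=peak_cell_temp_c)
print(best, peak_cell_temp_c(best))
```

With a real solver, each evaluation would be a queued job rather than a function call, and a fractional or space-filling design would usually replace the full factorial once the number of factors grows.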

To further reduce time-to-market, many organisations are embedding CFD into their digital twin strategies. Real-time or near-real-time reduced CFD models can be deployed within control systems to predict temperature, pressure, or flow distribution under varying operating conditions. This fusion of CFD and digital twin technology enables predictive maintenance, energy optimisation, and continuous performance tuning long after the initial product launch.

Machine learning-enhanced predictive modelling for rapid design iteration

Machine learning (ML) provides a powerful complement to traditional simulation by learning patterns from historical data and high-fidelity analyses. When combined with advanced simulation technologies, ML models function as fast “meta-models” or surrogates that approximate complex physics with a fraction of the computational cost. This enables rapid design iteration, what-if scenario analysis, and early-stage feasibility assessments, even when full-scale simulations would be prohibitively slow.

Instead of simulating every possible design from scratch, engineers can train ML models on a curated set of simulation results and experimental data. These models then predict performance metrics—such as stress, drag coefficient, or fatigue life—almost instantly for new design candidates. The result is a hybrid workflow where high-fidelity simulations are reserved for critical verification, while ML-driven surrogates guide daily design decisions and accelerate convergence to optimal solutions.
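The surrogate idea can be illustrated with the simplest possible model: a least-squares polynomial fitted to a handful of simulation results. This is a sketch under stated assumptions, using a polynomial in place of the neural networks discussed later, and `expensive_simulation` is an invented stand-in for a real solver.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations (small problems only)."""
    n = degree + 1
    # Normal equations for the Vandermonde matrix A[i][j] = xs[i] ** j.
    ata = [[sum(x ** (r + c) for x in xs) for c in range(n)] for r in range(n)]
    aty = [sum(y * x ** r for x, y in zip(xs, ys)) for r in range(n)]
    aug = [row + [rhs] for row, rhs in zip(ata, aty)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution.
    coeffs = [0.0] * n
    for r in reversed(range(n)):
        acc = aug[r][n] - sum(aug[r][c] * coeffs[c] for c in range(r + 1, n))
        coeffs[r] = acc / aug[r][r]
    return coeffs


def predict(coeffs, x):
    """Evaluate the fitted polynomial at x."""
    return sum(c * x ** i for i, c in enumerate(coeffs))


def expensive_simulation(angle_deg):
    """Invented stand-in for a slow solver returning a drag coefficient."""
    return 0.02 + 0.001 * angle_deg ** 2


train_x = [0.0, 2.0, 4.0, 6.0, 8.0]
train_y = [expensive_simulation(x) for x in train_x]
surrogate = fit_polynomial(train_x, train_y, degree=2)
```

Once fitted, `predict(surrogate, x)` answers in microseconds, so the full solver is only needed to verify the shortlisted designs and to extend the training set where the surrogate is uncertain.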

TensorFlow integration with CAD systems for automated design optimisation

TensorFlow has become a popular framework for embedding deep learning into engineering design environments. By integrating TensorFlow models with CAD systems via APIs or custom plugins, organisations can perform automated design optimisation directly within their familiar modelling tools. Designers adjust geometry, and in the background, TensorFlow-powered models instantly estimate performance outcomes and suggest improvements.

For example, an aerospace team might train a convolutional neural network (CNN) in TensorFlow to predict aerodynamic drag based on wing geometry exported from CAD. Once deployed, the model works like an intelligent assistant: as you modify the airfoil shape or winglet configuration, it provides real-time feedback on drag, lift-to-drag ratio, and fuel efficiency. This tight CAD–TensorFlow integration reduces the number of full CFD runs needed, shifting simulation resources to late-stage validation rather than early exploratory work.

To avoid “black box” concerns, leading organisations pair these deep learning models with explainability tools and design constraints derived from physics. This ensures that auto-generated design suggestions remain physically feasible and compliant with manufacturing constraints, rather than purely optimised for numerical performance.

PyTorch neural networks for materials property prediction

PyTorch excels in research-heavy environments where rapid prototyping of neural network architectures is key, making it an ideal choice for materials property prediction. By training networks on datasets of composition, processing conditions, and microstructure images, engineers can predict properties such as yield strength, fatigue resistance, or thermal conductivity. This approach dramatically accelerates materials selection and development for new products.

Consider the development of advanced composites for aerospace or battery materials for electric vehicles. Traditional testing cycles can span months, involving repeated synthesis, processing, and characterisation. PyTorch-based models can learn how subtle changes in formulation or processing parameters affect final properties, enabling you to explore thousands of virtual material candidates before committing to lab work. As a result, promising candidates reach the test bench faster, and unpromising directions are filtered out early, shortening the overall innovation cycle.

When combined with high-fidelity simulation data, such as micro-scale finite element models of microstructure, PyTorch networks become powerful surrogates that bridge multiple scales, from microstructure to components. This multiscale capability is particularly valuable in sectors like automotive and aerospace, where material performance under complex loading conditions directly affects safety and regulatory approval timelines.

Scikit-learn algorithms in failure mode analysis and prevention

Scikit-learn provides a robust suite of classical machine learning algorithms—such as random forests, support vector machines, and gradient boosting—that are well-suited to failure mode analysis. By training models on historical test results, warranty claims, and simulation outputs, organisations can identify which combinations of design variables and operating conditions are most likely to cause failure. This data-driven approach complements conventional Failure Mode and Effects Analysis (FMEA) with quantitative risk estimates.

For instance, an industrial equipment manufacturer might use scikit-learn to analyse a dataset combining load cycles, temperature profiles, and geometric parameters for a critical component. The model can highlight which parameter ranges correlate strongly with fatigue cracks or early wear, revealing hidden interactions that traditional analyses might miss. Armed with these insights, engineering teams refine design margins, adjust process parameters, or introduce design safeguards to prevent failures before they occur.
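To show the kind of question such a model answers, the sketch below searches for the single parameter threshold that best separates failed from surviving components. This is a decision stump, the building block of the tree ensembles mentioned above, written in plain Python rather than with scikit-learn; the field names and test records are invented for illustration.

```python
def best_stump(rows, labels):
    """Find the (feature, threshold) split that misclassifies the fewest samples.

    rows: list of dicts mapping feature name to a numeric value.
    labels: list of bools, True where the component failed.
    The stump predicts failure when the feature value exceeds the threshold.
    Returns a tuple (errors, feature, threshold).
    """
    best = None
    for feature in rows[0]:
        values = sorted({row[feature] for row in rows})
        # Candidate thresholds sit midway between consecutive observed values.
        for lo, hi in zip(values, values[1:]):
            threshold = (lo + hi) / 2
            errors = sum((row[feature] > threshold) != failed
                         for row, failed in zip(rows, labels))
            if best is None or errors < best[0]:
                best = (errors, feature, threshold)
    return best


# Invented test records: load cycles (thousands) and peak temperature (deg C).
rows = [
    {"load_cycles_k": 120, "peak_temp_c": 70},
    {"load_cycles_k": 300, "peak_temp_c": 75},
    {"load_cycles_k": 150, "peak_temp_c": 95},
    {"load_cycles_k": 280, "peak_temp_c": 105},
    {"load_cycles_k": 200, "peak_temp_c": 80},
    {"load_cycles_k": 220, "peak_temp_c": 110},
]
failed = [False, False, True, True, False, True]
print(best_stump(rows, failed))
```

A random forest or gradient-boosted model combines many such splits across several variables, which is what lets it expose interactions that a single threshold cannot.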

Integrating scikit-learn workflows into the product development process also streamlines compliance documentation. When regulators or customers ask why a specific safety factor was chosen, you can point to statistically grounded models and ensemble-based risk estimates, rather than relying solely on expert judgment.

Reinforcement learning applications in topology optimisation processes

Reinforcement learning (RL) brings a new level of autonomy to topology optimisation by allowing algorithms to “learn” how to modify geometry to maximise performance objectives. Unlike traditional gradient-based methods that follow a predetermined optimisation path, RL agents explore the design space, receiving rewards for improvements in metrics such as stiffness-to-weight ratio, natural frequency, or thermal performance. Over time, the agent discovers non-intuitive structural layouts that can outperform human-designed concepts.

In practice, RL-driven topology optimisation involves linking an RL framework—often built on TensorFlow or PyTorch—with a finite element solver. At each iteration, the agent proposes changes to the material distribution or geometric features. The solver evaluates the resulting structure and returns a performance score, which the agent uses to refine its strategy. This iterative loop continues until the design converges to an optimal or near-optimal configuration.
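The propose, evaluate, reward loop can be sketched with a deliberately tiny example. The code below uses stateless, bandit-style value learning with epsilon-greedy exploration, which is far simpler than the deep RL agents described above, and the "solver" is an invented closed-form compliance formula for a four-segment cantilever rather than a finite element code.

```python
import random

MOMENTS = [4.0, 3.0, 2.0, 1.0]  # bending moment per segment, root first (toy values)


def compliance(thickness):
    """Toy solver: bending compliance of a segmented cantilever (lower is stiffer)."""
    return sum(m * m / t ** 3 for m, t in zip(MOMENTS, thickness))


def optimise(episodes=200, steps_per_episode=1, epsilon=0.1, alpha=0.5, seed=0):
    """Learn which material moves pay off; return the best design and its score."""
    rng = random.Random(seed)
    n = len(MOMENTS)
    # Each action moves one unit of thickness from segment src to segment dst,
    # so total mass is conserved.
    actions = [(s, d) for s in range(n) for d in range(n) if s != d]
    q = [0.0] * len(actions)  # running value estimate per action
    start = [3] * n
    best_design, best_score = list(start), compliance(start)
    for _ in range(episodes):
        design = list(start)
        for _ in range(steps_per_episode):
            if rng.random() < epsilon:
                a = rng.randrange(len(actions))                   # explore
            else:
                a = max(range(len(actions)), key=q.__getitem__)   # exploit
            src, dst = actions[a]
            if design[src] <= 1:
                reward = -1.0  # infeasible move: minimum thickness must remain
            else:
                before = compliance(design)
                design[src] -= 1
                design[dst] += 1
                reward = before - compliance(design)  # positive when stiffer
            q[a] += alpha * (reward - q[a])
            score = compliance(design)
            if score < best_score:
                best_design, best_score = list(design), score
    return best_design, best_score
```

Even in this toy setting the agent learns to shift material from the lightly loaded tip towards the root. A realistic implementation would condition the policy on the current design state and call an actual solver for each evaluation.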

By automating large portions of the search process, RL reduces the number of manual design iterations and helps uncover innovative lightweight structures, particularly valuable in aerospace, automotive, and consumer electronics. The result is not just faster time-to-market, but also products that are more competitive in terms of performance and sustainability.

Cloud-based high performance computing for distributed simulation workloads

As simulation models grow more detailed and multidomain, on-premises hardware often becomes a bottleneck. Cloud-based High Performance Computing (HPC) platforms offer elastic resources that scale on demand, enabling organisations to run thousands of simulation cases in parallel. This distributed approach transforms simulation from a scarce resource into an accessible service, helping teams compress weeks of computation into hours.

Cloud providers now offer specialised instances optimised for finite element analysis, CFD, and machine learning, along with managed services for job scheduling, storage, and data visualisation. By shifting simulation workloads to the cloud, you can align computing spend with project needs, spinning up clusters during peak development phases and scaling down between major milestones. This flexibility is particularly valuable when you are racing competitors to launch innovations in crowded markets.

AWS EC2 elastic computing for large-scale finite element analysis

Amazon Web Services (AWS) EC2 provides a wide range of instance types tailored for compute- and memory-intensive workloads, making it ideal for large-scale finite element analysis (FEA). Engineering teams can deploy clusters of EC2 instances running popular solvers such as Abaqus, ANSYS Mechanical, or NASTRAN, orchestrated via AWS ParallelCluster or third-party schedulers. This elastic computing model allows you to scale from a handful of cores to thousands as project demands grow.

For example, during a crashworthiness study of a new vehicle platform, dozens of impact scenarios must be evaluated under varying conditions and configurations. Running these simulations sequentially on local hardware could take months. By distributing the workload across an EC2-based cluster, all scenarios run in parallel, with results available in days. This acceleration not only shortens time-to-market but also supports more exhaustive exploration of safety-critical edge cases.
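The fan-out pattern is the same whether the workers are local threads or cloud nodes. The sketch below uses Python's `concurrent.futures` to run independent cases concurrently; `run_crash_case` is a hypothetical placeholder that returns an invented number, where a real workflow would submit a solver job to a cluster scheduler and wait for its result.

```python
from concurrent.futures import ThreadPoolExecutor


def run_crash_case(case):
    """Placeholder for one solver job; returns a made-up peak deceleration."""
    speed_kph, offset_pct = case
    return {"case": case, "peak_g": round(0.5 * speed_kph + 0.1 * offset_pct, 2)}


def run_all(cases, max_workers=8):
    """Run independent cases concurrently and return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_crash_case, cases))


# Illustrative scenario matrix: impact speed (km/h) by barrier overlap (%).
cases = [(speed, offset) for speed in (40, 56, 64) for offset in (0, 40, 100)]
results = run_all(cases)
worst = max(results, key=lambda r: r["peak_g"])
print(worst)
```

Because the cases share no state, wall-clock time is governed by the slowest single case rather than the sum of all cases, provided enough workers are available.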

Security and compliance considerations are addressed through Virtual Private Clouds (VPCs), encryption, and fine-grained access controls. Many organisations also use AWS cost-monitoring tools to track simulation spend and allocate costs to specific programmes or business units, ensuring financial transparency.

Microsoft Azure HPC clusters in pharmaceutical drug discovery simulations

Microsoft Azure offers purpose-built HPC capabilities that are particularly attractive to pharmaceutical and biotech companies conducting complex drug discovery simulations. Molecular dynamics, protein–ligand docking, and quantum chemistry calculations are computationally demanding, yet they are central to reducing the need for costly wet-lab experiments. Azure’s HPC clusters, equipped with high-speed interconnects and GPU-enabled nodes, provide the performance needed to run these simulations at scale.

Drug discovery teams can orchestrate thousands of docking runs or long-timescale molecular dynamics simulations in parallel, exploring vast chemical spaces in a fraction of the traditional time. By integrating Azure Batch or Azure CycleCloud with existing workflows, organisations automate job submission, monitoring, and data aggregation. This automation converts what used to be a serial, labour-intensive process into a high-throughput virtual screening pipeline.

The impact on time-to-market is significant: potential candidates with low predicted efficacy or high toxicity risks are filtered out early, focusing experimental resources on the most promising molecules. In a sector where each month of delay can translate into millions in lost revenue, such simulation-driven acceleration is a strategic advantage.

Google Cloud Platform GPU acceleration for neural network training

Google Cloud Platform (GCP) provides powerful GPU and TPU instances optimised for training and deploying neural networks at scale. For organisations combining simulation with machine learning—such as training surrogate models on FEA or CFD results—GCP’s GPU acceleration dramatically shortens model training cycles. Faster training means you can iterate on network architectures and hyperparameters more often, achieving higher accuracy in less time.

For example, a manufacturer might use GCP to train a deep neural network that predicts structural response under various loading conditions, based on a training set generated from thousands of FEA runs. Instead of waiting days for each training iteration on local hardware, GPU-accelerated training completes in hours. This rapid feedback loop enables data scientists and engineers to refine the model and deploy it into design workflows sooner, unlocking real-time predictive insights during concept development.

Once trained, these models can be hosted on GCP’s Vertex AI (the successor to the legacy AI Platform), serving predictions to applications across the product lifecycle, from design optimisation dashboards to predictive maintenance portals for deployed assets.

IBM Cloud quantum computing integration with classical simulation methods

IBM Cloud is pioneering the integration of quantum computing with classical simulation, opening up new possibilities for solving complex optimisation and materials science problems. While quantum hardware is still emerging, hybrid approaches—where quantum algorithms tackle specific subproblems within a larger classical workflow—are already showing promise. For example, quantum-inspired algorithms can accelerate combinatorial optimisation tasks that are common in logistics, materials design, and structural layout.

In a typical hybrid setup, classical simulations handle bulk physics calculations, while quantum or quantum-inspired solvers focus on high-dimensional optimisation steps. IBM’s Qiskit framework, accessible via IBM Cloud, allows researchers to experiment with quantum circuits that search for optimal configurations or energy minima, with the aim of eventually outperforming traditional methods on suitable problem classes. As these capabilities mature, they could significantly reduce the time needed to find globally optimal designs in complex design spaces.

Although still at an early stage for mainstream engineering, forward-looking organisations are beginning to prototype these hybrid workflows. Doing so positions them to capitalise quickly as quantum hardware scales, turning what is today an experimental capability into a future competitive differentiator for time-to-market.

Industry-specific simulation case studies and time-to-market metrics

Across industries, advanced simulation technologies are delivering measurable reductions in time-to-market while improving product performance and reliability. In automotive, virtual homologation and digital crash testing have cut physical prototype builds by up to 50%, enabling manufacturers to bring new vehicle platforms to market 6–12 months faster. In aerospace, multi-physics digital twins of engines and airframes support predictive maintenance and extended life cycles, contributing to reduced certification timelines and fewer in-service issues.

Consumer electronics companies use thermal and structural simulation to compress development of smartphones, wearables, and IoT devices into rapid annual cycles. By simulating drop tests, thermal hotspots, and antenna performance early, they minimise late-stage design changes that could derail product launches. Similarly, in process industries such as chemicals and oil & gas, plant-wide dynamic simulations support safer, faster commissioning of new facilities, allowing operators to rehearse transient events and optimise control strategies in a virtual environment before first production.

To quantify the impact of these approaches, leading organisations track time-to-market metrics alongside simulation maturity indicators. Common metrics include the number of design iterations completed per month, simulation hours per project, ratio of virtual to physical tests, and the percentage of issues detected in simulation rather than in late-stage testing. When you combine these metrics with business KPIs—such as launch dates met, warranty claims, or regulatory findings—you can directly correlate simulation investment with commercial outcomes.
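These indicators are simple ratios, so they are easy to compute consistently across programmes. The sketch below shows one possible definition of each; the function name, argument names, and example figures are illustrative assumptions rather than an industry standard.

```python
def simulation_kpis(virtual_tests, physical_tests, issues_in_simulation,
                    issues_in_late_testing, design_iterations, months):
    """Compute simulation maturity indicators for one programme."""
    total_issues = issues_in_simulation + issues_in_late_testing
    return {
        # How many virtual tests are run for each physical test.
        "virtual_to_physical_ratio": virtual_tests / physical_tests,
        # Share of all detected issues that were caught in simulation.
        "issues_caught_in_simulation_pct": 100.0 * issues_in_simulation / total_issues,
        # Design iteration throughput.
        "iterations_per_month": design_iterations / months,
    }


kpis = simulation_kpis(virtual_tests=400, physical_tests=20,
                       issues_in_simulation=45, issues_in_late_testing=5,
                       design_iterations=36, months=12)
print(kpis)
```

Tracking the same definitions release after release matters more than the exact formulas, since the trend is what links simulation investment to launch outcomes.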

Regulatory compliance acceleration through virtual testing environments

Regulatory compliance is often perceived as a drag on innovation speed, but advanced simulation can transform it into a more streamlined, predictable process. Virtual testing environments allow organisations to demonstrate compliance with safety, emissions, and performance standards using digital evidence, reducing reliance on expensive and time-consuming physical tests. Regulators in automotive, aerospace, and medical devices are increasingly open to simulation-based evidence, provided that models are validated and accompanied by robust traceability.

For example, virtual crash tests and pedestrian impact simulations now play a central role in satisfying automotive safety regulations, significantly reducing the number of full-vehicle crash tests required. In medical devices, finite element models of implants and cardiovascular devices support submissions to regulatory bodies by demonstrating structural integrity, fatigue life, and interaction with biological tissues. These virtual studies not only accelerate approval but also help identify worst-case scenarios that might be impractical or unethical to reproduce experimentally.

To harness these benefits, organisations must invest in model validation, uncertainty quantification, and documentation practices that stand up to regulatory scrutiny. That means maintaining an auditable digital thread connecting requirements, models, input data, and results. When regulators can trace every step—from initial assumptions to final conclusions—trust in virtual evidence increases. Over time, as more approvals incorporate simulation results, virtual testing will become a primary pathway for compliance, further shortening the time-to-market for complex, safety-critical innovations.
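One lightweight way to make such a digital thread tamper-evident is to chain records by hash, so that each entry commits to everything before it. The sketch below is an illustration under that assumption, not a regulatory requirement or a feature of any specific PLM tool; the record kinds and payload fields are hypothetical.

```python
import hashlib
import json


def _digest(kind, payload, previous):
    """SHA-256 over the record content and the previous record's hash."""
    body = json.dumps({"kind": kind, "payload": payload, "previous": previous},
                      sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def add_record(thread, kind, payload):
    """Append a record that is linked to the previous one by hash."""
    previous = thread[-1]["hash"] if thread else ""
    record = {"kind": kind, "payload": payload, "previous": previous,
              "hash": _digest(kind, payload, previous)}
    thread.append(record)
    return record


def verify(thread):
    """Return True only if no record has been altered, removed, or reordered."""
    previous = ""
    for record in thread:
        expected = _digest(record["kind"], record["payload"], previous)
        if record["previous"] != previous or record["hash"] != expected:
            return False
        previous = record["hash"]
    return True


thread = []
add_record(thread, "requirement", {"id": "REQ-001", "text": "fatigue life target"})
add_record(thread, "model", {"solver": "FEA", "mesh": "fine"})
add_record(thread, "result", {"requirement": "REQ-001", "passed": True})
```

Calling `verify(thread)` after any later edit to a stored record returns `False`, which gives auditors a quick integrity check before they review the underlying evidence.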