Industrial operations are experiencing a fundamental transformation as predictive analytics revolutionises how manufacturers approach maintenance, production planning, and operational efficiency. The convergence of machine learning algorithms, IoT sensor networks, and advanced data processing capabilities has created unprecedented opportunities for industrial enterprises to optimise their operations proactively rather than reactively. This shift from traditional scheduled maintenance to intelligent, data-driven decision-making represents one of the most significant advances in industrial automation since the introduction of programmable logic controllers.

The industrial sector generates vast amounts of data from equipment sensors, production systems, and operational processes, yet historically, much of this valuable information remained untapped. Modern predictive analytics platforms now harness this data deluge, transforming raw sensor readings into actionable insights that drive operational excellence. Manufacturing facilities implementing comprehensive predictive analytics solutions report downtime reductions of 30-50% and maintenance cost savings of 10-25%, whilst simultaneously improving product quality and energy efficiency.

Machine learning algorithms transforming industrial maintenance strategies

The application of sophisticated machine learning algorithms in industrial maintenance represents a paradigm shift from reactive to predictive operational strategies. These algorithms analyse vast datasets from multiple sources, identifying subtle patterns and correlations that human operators might miss. The transformation extends beyond simple fault detection to encompass comprehensive equipment health monitoring, performance optimisation, and strategic maintenance planning that aligns with production schedules and business objectives.

Machine learning models excel at processing complex, multi-dimensional data streams from industrial equipment, learning from historical patterns whilst adapting to new operational conditions. Advanced algorithms can simultaneously monitor hundreds of parameters, detecting anomalies that indicate potential failures weeks or months before they occur. This capability enables maintenance teams to plan interventions during scheduled downtime, optimising both equipment availability and maintenance resource allocation.

Random forest models for equipment failure pattern recognition

Random forest algorithms have emerged as particularly effective tools for identifying equipment failure patterns in complex industrial environments. These ensemble learning methods combine multiple decision trees to create robust predictive models that excel at handling the non-linear relationships common in industrial data. The algorithm’s ability to rank feature importance helps maintenance engineers understand which operational parameters most significantly influence equipment health, enabling targeted monitoring and intervention strategies.

Industrial applications of random forest models demonstrate remarkable accuracy in predicting bearing failures, motor malfunctions, and hydraulic system degradation. The models analyse hundreds of variables including vibration signatures, temperature profiles, pressure variations, and operational loads to identify the unique fingerprints associated with different failure modes. This granular understanding enables maintenance teams to implement condition-specific maintenance protocols that address root causes rather than symptoms.
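The ensemble idea can be illustrated with a deliberately small sketch in plain Python. It is not a production model: each tree is reduced to a single-split stump, the sensor readings are synthetic, and the feature names (`vibration_mm_s`, `temperature_c`, `load_pct`) are assumptions made for illustration. It shows the three ingredients of a random forest (bootstrap sampling, random feature subsets, majority voting) together with an impurity-based feature importance ranking.

```python
import random
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def majority(labels):
    """Most common label in the list."""
    return Counter(labels).most_common(1)[0][0]

def fit_stump(rows, labels, features):
    """Pick the one-threshold split with the lowest weighted Gini impurity."""
    parent, best = gini(labels), None
    for f in features:
        for t in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= t]
            right = [y for r, y in zip(rows, labels) if r[f] > t]
            if not left or not right:
                continue
            child = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if best is None or child < best["impurity"]:
                best = {"feature": f, "threshold": t, "impurity": child,
                        "gain": parent - child,
                        "left": majority(left), "right": majority(right)}
    return best

def fit_forest(rows, labels, n_trees=25, max_features=2, seed=0):
    """Train stumps on bootstrap samples, each seeing a random feature subset."""
    rng = random.Random(seed)
    names = sorted(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]   # bootstrap sample
        feats = rng.sample(names, max_features)          # random feature subset
        stump = fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feats)
        if stump is not None:  # None means the sample could not be split
            forest.append(stump)
    return forest

def predict(forest, row):
    """Majority vote across all stumps."""
    votes = [s["left"] if row[s["feature"]] <= s["threshold"] else s["right"]
             for s in forest]
    return majority(votes)

def feature_importance(forest):
    """Share of total impurity reduction attributed to each feature used."""
    gains = Counter()
    for s in forest:
        gains[s["feature"]] += s["gain"]
    total = sum(gains.values())
    return {f: g / total for f, g in gains.items()}

# Synthetic readings: vibration and temperature separate the classes, load does not.
rows, labels = [], []
for i in range(20):
    load = 40 + (i * 7) % 50
    rows.append({"vibration_mm_s": 1.0 + 0.1 * i, "temperature_c": 50 + i, "load_pct": load})
    labels.append("healthy")
    rows.append({"vibration_mm_s": 4.0 + 0.1 * i, "temperature_c": 80 + i, "load_pct": load})
    labels.append("failing")

forest = fit_forest(rows, labels)
importance = feature_importance(forest)
```

In this synthetic setup the importance scores concentrate on vibration and temperature, which is the kind of ranking a maintenance engineer would use to decide which parameters deserve closer monitoring. A real deployment would normally rely on an established library with full-depth trees and a held-out validation set.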

Neural network applications in vibration analysis and anomaly detection

Deep neural networks have revolutionised vibration analysis capabilities in industrial settings, enabling the detection of subtle anomalies that traditional frequency-domain analysis might overlook. Convolutional neural networks (CNNs) process vibration spectrograms to identify characteristic patterns associated with specific fault conditions, whilst recurrent neural networks capture temporal dependencies in vibration signals that indicate progressive wear or developing problems.

The implementation of neural networks for anomaly detection extends beyond simple threshold monitoring to encompass sophisticated pattern recognition that adapts to changing operational conditions. These systems learn normal operational signatures for each piece of equipment, automatically adjusting baselines as conditions evolve. When anomalies occur, the networks provide detailed diagnostic information, pinpointing the likely location and nature of developing problems with unprecedented accuracy.
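The adaptive-baseline idea can be sketched without a neural network at all. The example below swaps in a much simpler statistical stand-in, an exponentially weighted mean and variance with a z-score alarm, purely to show how a baseline can follow slow drift while still flagging abrupt deviations. The class name, parameters, and default values are assumptions for illustration.

```python
import math

class AdaptiveBaseline:
    """Exponentially weighted running mean and variance with a z-score alarm."""

    def __init__(self, alpha=0.05, z_limit=4.0, warmup=50):
        self.alpha = alpha        # how quickly the baseline follows new data
        self.z_limit = z_limit    # alarm when |deviation| exceeds this many std devs
        self.warmup = warmup      # readings to observe before raising alarms
        self.mean = None
        self.var = 0.0
        self.count = 0

    def update(self, x):
        """Feed one reading; return True if it is anomalous versus the baseline."""
        self.count += 1
        if self.mean is None:
            self.mean = x
            return False
        deviation = x - self.mean
        std = math.sqrt(self.var)
        anomalous = (self.count > self.warmup and std > 0
                     and abs(deviation) > self.z_limit * std)
        if not anomalous:
            # Only normal readings move the baseline, so a fault cannot mask itself.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return anomalous

# Synthetic signal: slow upward drift plus a small oscillation, then a spike.
detector = AdaptiveBaseline()
normal_readings = [2.0 + 0.001 * i + 0.1 * math.sin(i) for i in range(300)]
normal_flags = [detector.update(x) for x in normal_readings]
spike_flag = detector.update(5.0)
```

The drift in the synthetic signal is absorbed into the baseline, whereas the spike is flagged. A learned model would replace the single mean and variance with a richer representation of normal behaviour, but the update-unless-anomalous pattern is the same.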

Support vector machines for quality control process optimisation

Support Vector Machines (SVMs) excel in quality control applications where clear boundaries between acceptable and unacceptable outcomes must be established. These algorithms create maximum-margin decision boundaries in high-dimensional feature spaces, enabling precise classification of product quality based on multiple process parameters. Because training solves a convex optimisation problem, an SVM yields the same boundary for a given dataset and set of hyperparameters, which suits applications where consistency is paramount; with a linear kernel, the learned weights can also be inspected directly, which aids interpretability.

Industrial quality control systems utilising SVM algorithms can simultaneously monitor dozens of process variables, identifying the subtle parameter combinations that lead to quality defects. Quality control datasets are typically imbalanced, with defective products representing a small fraction of total production, so SVMs are usually trained with class weighting or resampling to keep the rare defect class from being ignored. Applied this way, they enable early detection of quality drift, allowing process engineers to make adjustments before defective products are produced.

Time series forecasting with LSTM networks for production planning

Long Short-Term Memory (LSTM) networks have transformed production planning by enabling highly accurate time series forecasting across complex, multi-stage manufacturing environments. Unlike traditional statistical methods, LSTMs capture long-range temporal dependencies in production, demand, and supply data, learning how seasonal patterns, shift changes, maintenance events, and external market conditions interact over time. This makes them particularly powerful for forecasting machine utilisation, work-in-progress levels, and order backlogs in dynamic industrial operations.

In practical deployments, LSTM-based forecasting models ingest historical production output, order intake, lead times, and even external signals such as commodity prices or weather data. The models then generate rolling forecasts that update as new data arrives, allowing planners to adjust schedules, labour allocations, and material purchases in near real time. Manufacturers adopting LSTM-based planning often report improved schedule adherence, reduced overtime costs, and lower inventory buffers, as production plans become more closely aligned with actual demand patterns.
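The rolling-forecast loop described above can be sketched independently of the model that sits inside it. The example below uses simple exponential smoothing as a stand-in for an LSTM, so it illustrates only the pattern of forecasting, observing the actual value, and updating; the function name and the `alpha` default are assumptions.

```python
def rolling_forecasts(history, alpha=0.3):
    """One-step-ahead forecasts that are refreshed as each new observation arrives.

    Returns (forecasts, next_forecast), where forecasts[i] was made before
    history[i + 1] was seen and next_forecast is for the first unseen period.
    """
    level = history[0]
    forecasts = []
    for actual in history[1:]:
        forecasts.append(level)                       # forecast issued first
        level = alpha * actual + (1 - alpha) * level  # then updated with new data
    return forecasts, level

# Hypothetical daily output figures (units produced).
daily_output = [100, 110]
forecasts, next_forecast = rolling_forecasts(daily_output, alpha=0.5)
```

Comparing each stored forecast with the actual value that followed gives a running measure of forecast error, which is what planners would monitor before trusting a more complex model.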

IoT sensor integration and real-time data processing architectures

Effective predictive analytics in industrial operations depends on a robust architecture for IoT sensor integration and real-time data processing. Without reliable, high-quality data from machines and production systems, even the most advanced algorithms will underperform. Modern industrial analytics platforms therefore combine edge devices, streaming pipelines, time series databases, and secure communication protocols to create an end-to-end data fabric that spans from the shop floor to the cloud.

This architecture must support diverse sensor types, legacy controllers, and modern smart devices, all operating with different sampling rates and data formats. At the same time, industrial environments impose strict requirements around latency, determinism, and cybersecurity. The most successful implementations balance local processing at the edge with centralised analytics capabilities, ensuring that critical decisions can be made within milliseconds when necessary, while more computationally intensive modelling occurs in the cloud or data centre.

Edge computing deployment with Intel OpenVINO for latency reduction

Edge computing has become a cornerstone of industrial predictive analytics, particularly for use cases where milliseconds matter, such as high-speed assembly lines or continuous process control. Deploying models with Intel OpenVINO on edge gateways or industrial PCs allows manufacturers to run optimised inference close to the machines, dramatically reducing latency and network dependency. Instead of streaming raw high-frequency sensor data to the cloud, pre-processed features and events are generated locally and transmitted upstream as needed.
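The bandwidth saving from local pre-processing can be illustrated with a short sketch. It does not use OpenVINO; it only shows the step of condensing a window of raw samples into a few features before anything is sent upstream. The feature set (RMS, peak, crest factor), the function names, and the alarm threshold are assumptions chosen for illustration.

```python
import math

def window_features(samples):
    """Condense one window of raw samples into three summary features."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    peak = max(abs(s) for s in samples)
    crest = peak / rms if rms else 0.0
    return {"rms": rms, "peak": peak, "crest_factor": crest}

def edge_messages(stream, window_size, rms_alarm):
    """Yield one compact message per full window instead of every raw sample."""
    for start in range(0, len(stream) - window_size + 1, window_size):
        message = window_features(stream[start:start + window_size])
        message["alarm"] = message["rms"] > rms_alarm
        yield message

# 100 synthetic raw samples become 2 upstream messages.
raw_stream = [1, -1] * 50
messages = list(edge_messages(raw_stream, window_size=50, rms_alarm=0.5))
```

In practice the window size and features would be chosen to match the sampling rate and the failure modes of interest, and an optimised model could consume these features on the same device.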

OpenVINO converts models built in popular frameworks into an optimised intermediate representation that runs efficiently on CPUs, integrated GPUs, and dedicated accelerators at the edge. This allows vibration anomaly detection, vision-based quality inspection, and thermal monitoring models to operate directly on camera feeds or sensor streams, even in bandwidth-constrained environments. For operations leaders, this means more responsive safety systems, faster fault detection, and less risk of network outages disrupting critical monitoring functions.

Apache Kafka streaming pipelines for industrial data ingestion

Apache Kafka has become the de facto backbone for streaming data ingestion in large-scale industrial analytics deployments. Its distributed, fault-tolerant architecture supports the continuous flow of telemetry from thousands of sensors, PLCs, and manufacturing execution systems, while preserving ordering within each partition and enabling horizontal scalability. By treating data streams as durable logs, Kafka allows multiple consumer applications to process the same real-time data for different purposes without duplicating integration work.

In a typical setup, edge gateways or protocol converters publish machine data into Kafka topics, where separate consumers handle anomaly detection, dashboard visualisation, and long-term storage. This decoupling reduces the complexity of adding new analytics services and prevents point-to-point integrations from becoming a bottleneck as use cases expand. When you want to pilot a new predictive maintenance model or energy optimisation algorithm, you can simply subscribe to the relevant topics rather than re-engineering your entire data pipeline.
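The decoupling described here comes from the log model: records are appended once, and each consumer keeps its own read position. The toy class below imitates that behaviour in memory for a single partition. It is not the Kafka client API, and the class, method, and group names are hypothetical.

```python
class TopicLog:
    """In-memory stand-in for one topic partition: an append-only list of records."""

    def __init__(self):
        self.records = []
        self.offsets = {}  # consumer group name -> index of the next record to read

    def publish(self, record):
        """Append a record; producers know nothing about who will read it."""
        self.records.append(record)

    def poll(self, group):
        """Return records this group has not seen yet and advance its offset."""
        start = self.offsets.get(group, 0)
        self.offsets[group] = len(self.records)
        return self.records[start:]

log = TopicLog()
log.publish({"machine": "press-7", "temp_c": 71.5})
log.publish({"machine": "press-7", "temp_c": 72.0})
dashboard_first = log.poll("dashboard")      # existing consumer reads two records
log.publish({"machine": "press-7", "temp_c": 90.2})
dashboard_second = log.poll("dashboard")     # and later only the new one
pilot_backlog = log.poll("pilot-model")      # a new consumer replays everything
```

Because the new `pilot-model` consumer reads from its own offset, adding it changes nothing for the producer or for the dashboard, which is the property that makes piloting a new analytics service cheap.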

InfluxDB time series database management for sensor data storage

Time series databases such as InfluxDB are specifically designed to store and query high-volume sensor data efficiently, making them ideal for industrial predictive analytics platforms. InfluxDB’s schema-less design, compression techniques, and downsampling capabilities allow manufacturers to retain years of historical machine data without incurring prohibitive storage costs. This historical context is essential for training robust predictive models and understanding how equipment performance evolves over long periods.

Operations teams can use InfluxDB to maintain multiple resolutions of the same data, keeping second-level granularity for recent weeks while aggregating older data into minute or hourly summaries. This multi-resolution storage strategy supports both real-time monitoring dashboards and long-horizon trend analyses. When combined with visualisation tools, InfluxDB enables engineers to overlay predicted equipment health metrics with historical failure events, making it easier to validate models and build trust in predictive analytics outputs.
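The multi-resolution strategy can be sketched in plain Python to make the mechanics concrete. This is not InfluxDB query syntax; it only shows the underlying operation of keeping recent points raw and averaging older ones into fixed buckets. Function names and the tuple layout `(unix_seconds, value)` are assumptions.

```python
from collections import defaultdict

def downsample_mean(points, bucket_seconds):
    """Average (timestamp, value) points into fixed-width time buckets."""
    buckets = defaultdict(list)
    for ts, value in points:
        buckets[ts - ts % bucket_seconds].append(value)
    return [(start, sum(vals) / len(vals)) for start, vals in sorted(buckets.items())]

def multi_resolution(points, now, raw_window_seconds, bucket_seconds):
    """Keep recent points at full resolution and aggregate everything older."""
    cutoff = now - raw_window_seconds
    recent = [(ts, v) for ts, v in points if ts >= cutoff]
    older = downsample_mean([(ts, v) for ts, v in points if ts < cutoff], bucket_seconds)
    return older, recent

# Three minutes of synthetic second-level readings; the last minute stays raw.
readings = [(ts, float(ts)) for ts in range(180)]
older, recent = multi_resolution(readings, now=180, raw_window_seconds=60, bucket_seconds=60)
```

Here 120 older points collapse into two minute-level averages while the most recent 60 remain untouched, which mirrors the trade-off between storage cost and granularity described above.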

MQTT protocol implementation for secure device communication

MQTT has emerged as a lightweight, reliable messaging protocol for connecting industrial devices to analytics platforms, particularly in environments with constrained bandwidth or intermittent connectivity. Its publish–subscribe architecture allows sensors and controllers to send data to central brokers without needing to know which applications will consume it, simplifying device configuration and lifecycle management. For remote sites or mobile assets, MQTT’s small packet overhead and quality-of-service levels help ensure that critical telemetry reaches its destination.

From a security perspective, MQTT implementations in industrial settings increasingly rely on TLS encryption, client certificates, and fine-grained access control to protect machine data and prevent unauthorised device access. When combined with edge gateways, MQTT brokers can act as secure aggregation points, isolating local control networks from external systems. This approach lets you scale predictive analytics across multiple plants or regions while maintaining a robust security posture that aligns with OT and IT governance requirements.
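Fine-grained access control in MQTT is usually expressed in terms of topic filters, where `+` matches exactly one topic level and `#` matches all remaining levels. The sketch below implements that matching rule and a simple per-client allow list. It is a simplified illustration rather than broker code: it ignores the special handling of topics beginning with `$`, and the client identifiers, topic names, and `ACL` structure are hypothetical.

```python
def topic_matches(topic_filter, topic):
    """MQTT-style match: '+' is one level, '#' is this level and everything below."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)

def may_publish(client_id, topic, acl):
    """Allow a publish only if one of the client's filters covers the topic."""
    return any(topic_matches(f, topic) for f in acl.get(client_id, []))

# Hypothetical allow list: each gateway may only publish under its own machine.
ACL = {
    "press7-gateway": ["plant1/press7/#"],
    "line2-gateway": ["plant1/+/telemetry"],
}
```

A rule such as `plant1/press7/#` confines a compromised gateway to its own topic subtree, which complements the transport-level protection provided by TLS and client certificates.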

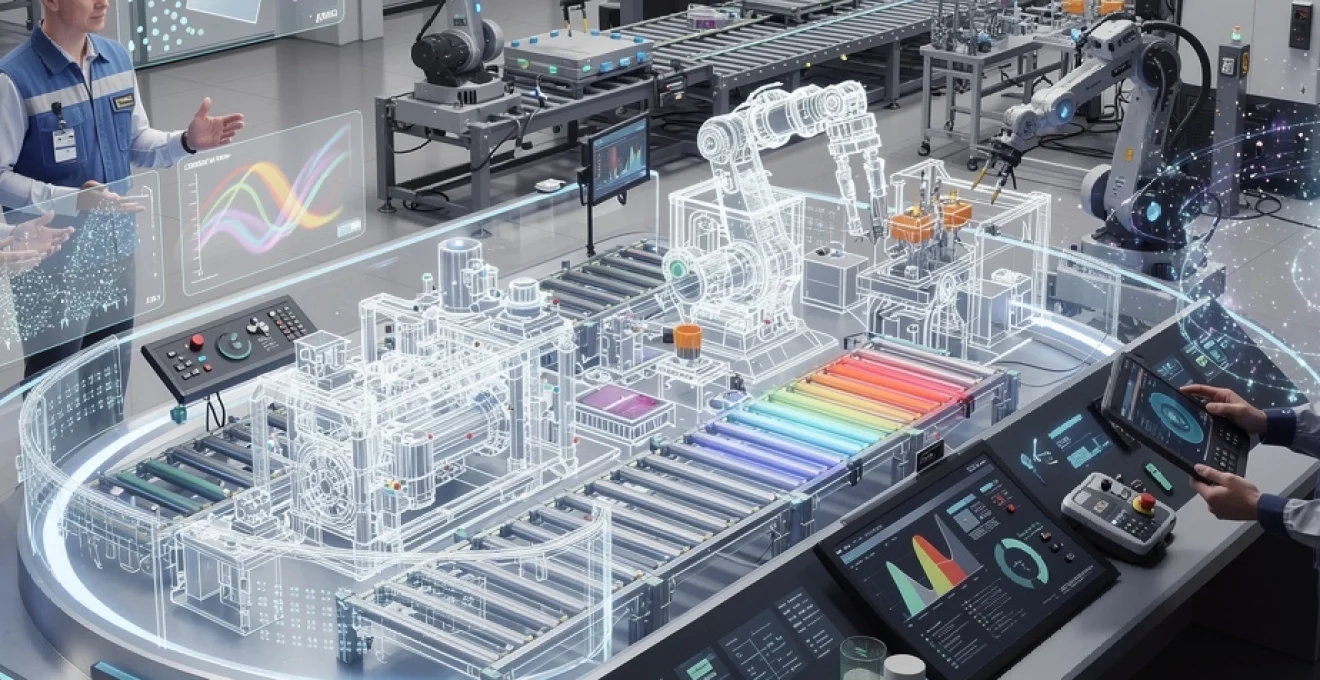

Digital twin technology and simulation-driven decision making

Digital twin technology extends predictive analytics by creating virtual replicas of physical assets, production lines, or entire facilities that evolve in synchrony with their real-world counterparts. These twins integrate live sensor data, engineering models, and historical performance information to provide a holistic view of system behaviour under varying conditions. Instead of asking “What happened?” or even “What will happen?”, you can start asking “What if we change this parameter, schedule, or configuration?” and see the impact in a safe virtual environment.

Simulation-driven decision making using digital twins enables manufacturers to test alternative maintenance strategies, production sequences, and configuration changes without disrupting ongoing operations. For example, a digital twin of a bottling line can simulate the impact of speed increases on defect rates and equipment wear, helping you identify the sweet spot between throughput and reliability. As more data flows into the twin, its predictive accuracy improves, transforming it from a static engineering model into a living decision-support tool that underpins continuous improvement initiatives.
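A what-if sweep of this kind can be sketched with a toy model. Everything numeric below is invented for illustration: the nominal speed, the quadratic defect curve, and the candidate speeds are assumptions rather than measurements, and equipment wear is left out entirely. The point is only the pattern of evaluating many settings against a model instead of against the physical line.

```python
def defect_rate(speed_bpm, nominal_bpm=300.0):
    """Hypothetical twin response: 1% defects up to nominal speed, rising quadratically above it."""
    excess = max(0.0, speed_bpm - nominal_bpm) / nominal_bpm
    return min(1.0, 0.01 + 2.0 * excess ** 2)

def good_bottles_per_min(speed_bpm):
    """Throughput of acceptable bottles at a given line speed."""
    return speed_bpm * (1.0 - defect_rate(speed_bpm))

def best_speed(candidates):
    """Candidate speed with the highest good-bottle throughput."""
    return max(candidates, key=good_bottles_per_min)

# Sweep line speeds from 250 to 450 bottles per minute in steps of 10.
sweet_spot = best_speed(range(250, 451, 10))
```

With these assumed curves the sweep settles on a speed above nominal but well below the maximum, because beyond that point the extra defects outweigh the extra throughput. A real twin would replace `defect_rate` with a model calibrated on live line data.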

Condition-based maintenance frameworks using predictive models

Condition-based maintenance (CBM) frameworks represent the practical expression of predictive analytics in day-to-day industrial operations. Rather than following fixed time-based schedules, CBM programmes trigger maintenance interventions based on actual equipment condition indicators derived from sensor data and predictive models. This shift reduces unnecessary component replacements, avoids catastrophic failures, and aligns maintenance tasks with production requirements.

Implementing a robust CBM framework involves more than deploying algorithms; it requires well-defined processes, clear governance, and integration with existing maintenance and planning systems. Maintenance teams need actionable insights, not raw scores or probabilities, along with guidance on recommended actions and associated risks. The most mature organisations combine leading indicators from predictive models with traditional lagging metrics, such as mean time between failures, to build a comprehensive picture of asset health.

Remaining useful life (RUL) estimation through degradation modelling

Remaining Useful Life (RUL) estimation is one of the most valuable applications of predictive analytics for maintenance planning. Degradation models track how key condition indicators evolve over time and project when they will cross critical thresholds associated with functional failure. By translating complex sensor data into an intuitive “time to failure” metric, RUL estimation helps planners schedule interventions at the optimal moment, balancing risk and availability.
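The simplest form of degradation modelling fits a straight line to a condition indicator and extrapolates it to the failure threshold. The sketch below does exactly that with ordinary least squares. A linear trend is an assumption that many real degradation processes violate, and the indicator values and threshold in the example are illustrative only.

```python
def fit_line(times, values):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    covariance = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    variance = sum((t - mean_t) ** 2 for t in times)
    slope = covariance / variance
    return slope, mean_v - slope * mean_t

def remaining_useful_life(times, values, failure_threshold):
    """Time from the last observation until the trend crosses the threshold.

    Returns None when the indicator is not increasing, because a flat or
    improving trend never reaches the threshold.
    """
    slope, intercept = fit_line(times, values)
    if slope <= 0:
        return None
    crossing_time = (failure_threshold - intercept) / slope
    return max(0.0, crossing_time - times[-1])

# Illustrative vibration readings (mm/s) taken every 10 days.
days = [0, 10, 20, 30]
vibration = [2.0, 2.5, 3.0, 3.5]
rul_days = remaining_useful_life(days, vibration, failure_threshold=7.0)
```

With these readings the trend rises by 0.05 mm/s per day, so the threshold of 7.0 is projected at day 100, about 70 days after the last measurement. Production models typically add uncertainty bounds around this estimate rather than reporting a single number.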

Advanced RUL models often combine physics-of-failure knowledge with data-driven techniques, capturing both known degradation mechanisms and patterns discovered from historical failures. For example, a model for rotating equipment may combine bearing fatigue equations with machine learning features derived from vibration spectra and operating loads. When integrated into your maintenance workflows, RUL outputs can drive spare parts procurement, workforce scheduling, and even contractual discussions with equipment suppliers around service-level agreements.

Fault detection and diagnosis systems with Siemens MindSphere

Industrial IoT platforms such as Siemens MindSphere provide a ready-made ecosystem for deploying fault detection and diagnosis solutions at scale. MindSphere connects to a wide range of Siemens and third-party equipment, collecting operational data into a unified cloud environment where analytics applications can be developed and managed. Pre-built connectors and templates help accelerate common use cases, such as monitoring drive systems, compressors, or packaging lines.

Within MindSphere, fault detection models can run continuously on live data streams, comparing current behaviour against learned baselines or known fault signatures. When anomalies are detected, diagnostic modules analyse contributing factors and present maintenance teams with probable root causes and recommended actions. Because the platform centralises data from multiple assets and plants, you can identify systemic issues, benchmark performance across facilities, and rapidly scale successful predictive maintenance applications throughout your industrial network.

Predictive maintenance ROI calculations and performance metrics

Demonstrating the return on investment (ROI) of predictive maintenance is essential for sustaining executive support and securing ongoing funding. A clear financial case typically combines direct savings from reduced unplanned downtime and maintenance costs with indirect benefits such as improved safety, higher product quality, and extended asset lifetimes. Analysts often begin with a simple equation that multiplies the cost of downtime per hour by the hours of downtime avoided, then layer in savings from optimised spare parts inventory and labour.
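The simple equation mentioned above can be written out directly. The function names are assumptions, and the figures in the example are placeholders rather than benchmarks; ROI is expressed here as net benefit divided by programme cost.

```python
def downtime_savings(cost_per_hour, hours_avoided):
    """Cost of downtime per hour multiplied by the hours of downtime avoided."""
    return cost_per_hour * hours_avoided

def simple_roi(cost_per_hour, hours_avoided, other_savings, programme_cost):
    """Net benefit relative to programme cost (0.5 means a 50% return)."""
    benefit = downtime_savings(cost_per_hour, hours_avoided) + other_savings
    return (benefit - programme_cost) / programme_cost

# Placeholder inputs: 10,000 per hour of downtime, 40 hours avoided,
# 50,000 saved on spares and labour, 300,000 spent on the programme.
roi = simple_roi(cost_per_hour=10_000, hours_avoided=40,
                 other_savings=50_000, programme_cost=300_000)
```

With these placeholder inputs the benefit is 450,000 against a cost of 300,000, giving a return of 0.5. Indirect benefits such as safety or quality improvements are harder to quantify and are usually reported alongside this figure rather than folded into it.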

To manage predictive maintenance programmes effectively, organisations track a set of performance metrics, including prediction accuracy, lead time to failure, false positive and false negative rates, and the proportion of maintenance activities that are condition-based versus reactive. Over time, you should see unplanned interventions decline, while planned, model-driven activities increase. Establishing a baseline before implementation and conducting periodic reviews helps you quantify progress and identify areas where models, data quality, or processes require refinement.
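Several of these metrics follow directly from counting how alerts compared with actual outcomes. The sketch below computes them from four counts; the function names and the example counts are assumptions for illustration.

```python
def _ratio(numerator, denominator):
    """Safe division that returns 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0

def alert_metrics(true_pos, false_pos, false_neg, true_neg):
    """Summarise how predicted failures compared with what actually happened."""
    return {
        "precision": _ratio(true_pos, true_pos + false_pos),
        "recall": _ratio(true_pos, true_pos + false_neg),
        "false_positive_rate": _ratio(false_pos, false_pos + true_neg),
        "false_negative_rate": _ratio(false_neg, false_neg + true_pos),
    }

def condition_based_share(condition_based_jobs, reactive_jobs):
    """Proportion of maintenance jobs that were triggered by condition data."""
    return _ratio(condition_based_jobs, condition_based_jobs + reactive_jobs)

# Example counts for one review period (illustrative only).
metrics = alert_metrics(true_pos=8, false_pos=2, false_neg=2, true_neg=88)
share = condition_based_share(condition_based_jobs=30, reactive_jobs=10)
```

Tracking these values at each periodic review, against the pre-implementation baseline, shows whether the programme is shifting work from reactive to planned and whether alert quality is improving.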

Integration with SAP Plant Maintenance and Oracle EAM systems

Integrating predictive analytics with enterprise asset management (EAM) systems such as SAP Plant Maintenance and Oracle EAM is critical to turning insights into action. Without this integration, maintenance teams are forced to manage model outputs in parallel tools or spreadsheets, increasing the risk that important warnings are overlooked. Tight coupling between analytics platforms and EAM systems allows predictive events to automatically generate notifications, work requests, or even fully populated work orders.

In a typical integration pattern, predictive models running on an IoT or analytics platform push health scores or RUL estimates into SAP or Oracle via APIs or middleware. Business rules within the EAM system then determine how to prioritise these alerts, assign them to technicians, and link them to relevant equipment records. This closed-loop approach ensures that your predictive maintenance strategy is embedded in existing workflows, making it easier for technicians, planners, and managers to adopt without completely changing how they work.
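The business-rule step in this pattern can be sketched as a small mapping from model outputs to a work request. The thresholds, priority labels, and dictionary fields below are hypothetical and do not correspond to any SAP or Oracle interface; in a real integration the resulting payload would be translated by middleware into whatever the EAM system expects.

```python
def to_work_request(asset_id, health_score, rul_days):
    """Map a health score (0 to 1) and RUL estimate to a work request, or None.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if rul_days <= 7 or health_score < 0.3:
        priority = "urgent"
    elif rul_days <= 30 or health_score < 0.6:
        priority = "planned"
    else:
        return None  # healthy asset: no notification is raised
    return {
        "asset_id": asset_id,
        "priority": priority,
        "description": (f"Predictive alert: health {health_score:.2f}, "
                        f"estimated RUL {rul_days} days"),
    }

urgent = to_work_request("PUMP-0042", health_score=0.25, rul_days=20)
planned = to_work_request("PUMP-0043", health_score=0.70, rul_days=21)
healthy = to_work_request("PUMP-0044", health_score=0.95, rul_days=200)
```

Keeping the rules in one place makes them easy to review with planners, who can adjust the thresholds as confidence in the models grows.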

Energy consumption optimisation through advanced analytics

Energy consumption represents a significant portion of operating costs in many industrial environments, making it a prime candidate for optimisation through predictive analytics. By combining real-time meter readings, process parameters, and production schedules, analytics models can identify when and where energy is being wasted, and recommend adjustments that reduce consumption without compromising output. In energy-intensive sectors such as metals, chemicals, or cement, even single-digit percentage savings can translate into substantial financial and environmental benefits.

Advanced techniques, including load disaggregation, regression modelling, and reinforcement learning, allow you to understand the relationship between energy usage and key operational variables. For example, models can highlight which combinations of machine speed, batch size, and ambient conditions yield the lowest energy per unit produced. Over time, these insights inform revised standard operating procedures, setpoints, and scheduling decisions, helping your organisation move towards more sustainable, low-carbon industrial operations.
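Before any modelling, the energy-per-unit comparison can be made with a simple aggregation over historical production runs. The sketch below groups runs by operating setting and ranks the settings by energy intensity. The field names (`speed`, `batch_size`, `kwh`, `units`) and the run data are assumptions for illustration.

```python
from collections import defaultdict

def energy_per_unit_by_setting(runs):
    """Total kWh divided by total units for each (speed, batch_size) setting."""
    totals = defaultdict(lambda: [0.0, 0])
    for run in runs:
        key = (run["speed"], run["batch_size"])
        totals[key][0] += run["kwh"]
        totals[key][1] += run["units"]
    return {key: kwh / units for key, (kwh, units) in totals.items()}

def most_efficient_setting(runs):
    """Setting with the lowest energy per unit produced."""
    intensity = energy_per_unit_by_setting(runs)
    return min(intensity, key=intensity.get)

# Illustrative run history.
runs = [
    {"speed": 100, "batch_size": 500, "kwh": 50.0, "units": 500},
    {"speed": 100, "batch_size": 500, "kwh": 70.0, "units": 500},
    {"speed": 120, "batch_size": 500, "kwh": 45.0, "units": 500},
]
intensity = energy_per_unit_by_setting(runs)
best_setting = most_efficient_setting(runs)
```

A regression or reinforcement learning model extends this idea to settings that have not yet been tried, but a grouped comparison like this is often enough to reveal the first candidates for a revised setpoint.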

Supply chain risk assessment and demand forecasting models

Predictive analytics extends beyond the factory walls to address supply chain risk and demand variability, both of which have become critical concerns in recent years. By analysing supplier performance data, lead time variability, logistics disruptions, and macroeconomic indicators, risk assessment models can flag vulnerabilities before they result in production stoppages. You can then take proactive measures, such as diversifying suppliers, adjusting safety stocks, or renegotiating contracts, rather than reacting after a disruption occurs.
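One way to see how lead time variability feeds into safety stock is the standard textbook formula that combines demand and lead time uncertainty, which assumes both are independent and roughly normally distributed. The sketch below applies it; the parameter names and the example figures are illustrative, and the service factor 1.645 corresponds to roughly a 95% cycle service level.

```python
import math

def safety_stock(z, mean_daily_demand, sd_daily_demand,
                 mean_lead_time_days, sd_lead_time_days):
    """Safety stock = z * sqrt(L * sd_demand^2 + mean_demand^2 * sd_lead_time^2)."""
    demand_term = mean_lead_time_days * sd_daily_demand ** 2
    lead_time_term = (mean_daily_demand ** 2) * sd_lead_time_days ** 2
    return z * math.sqrt(demand_term + lead_time_term)

# Same component, sourced from a reliable and an unreliable supplier.
reliable = safety_stock(1.645, mean_daily_demand=100, sd_daily_demand=20,
                        mean_lead_time_days=9, sd_lead_time_days=0)
unreliable = safety_stock(1.645, mean_daily_demand=100, sd_daily_demand=20,
                          mean_lead_time_days=9, sd_lead_time_days=2)
```

With these illustrative inputs, two days of lead time variability more than triples the buffer required, which is why supplier reliability data is such a valuable input to risk models.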

On the demand side, advanced forecasting models integrate historical sales, promotional calendars, market signals, and external factors like weather or regulatory changes to predict future order patterns with greater accuracy. Machine learning approaches, including gradient boosting and deep learning, can capture non-linear relationships and evolving customer behaviours that traditional methods struggle to model. When integrated with production planning and inventory management, these demand forecasts help synchronise industrial operations with market realities, reducing both stockouts and excess inventory while improving on-time delivery performance.