# Why cloud computing is becoming essential for scalable industrial operations

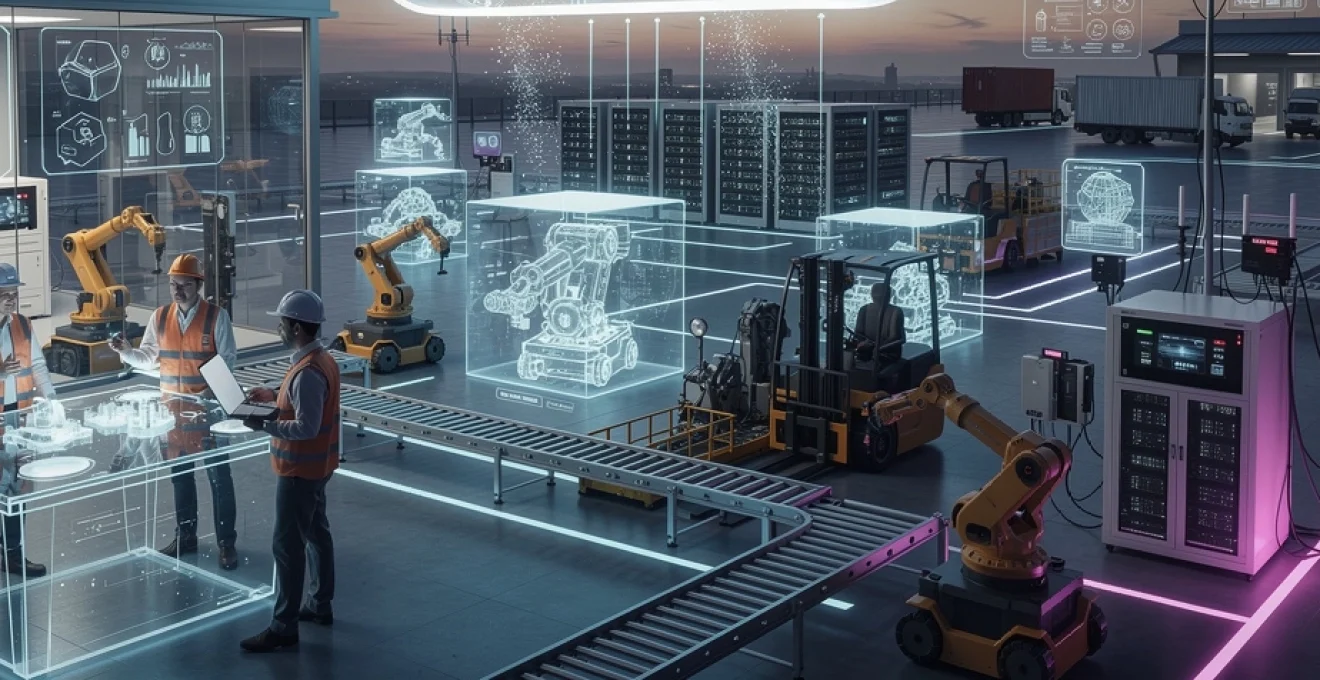

The industrial sector is undergoing a technological shift that is reshaping how manufacturers, logistics companies, and production facilities operate. Cloud computing is central to this shift, providing the computational flexibility and scalability that modern industrial operations demand. As you work through digital transformation, understanding the technical architecture and strategic advantages of cloud infrastructure is essential for staying competitive in an increasingly data-driven marketplace.

Industrial operations today generate large and fast-growing volumes of data from sensors, machinery, supply chain systems, and quality control processes. Traditional on-premises infrastructure often struggles to scale at the pace required to process, analyze, and derive actionable insights from this data. Cloud platforms offer the elasticity, processing power, and specialized services that turn raw industrial data into operational intelligence, enabling predictive maintenance, real-time optimization, and supply chain visibility that were previously difficult to achieve at scale.

## Infrastructure elasticity through multi-tenant cloud architectures

Multi-tenant cloud architectures represent a fundamental shift in how computational resources are provisioned and consumed within industrial environments. Rather than dedicating physical servers to specific workloads, cloud providers utilize sophisticated virtualization technologies that allow multiple customers to share underlying hardware while maintaining strict isolation and security boundaries. This architectural approach enables industrial organizations to access enterprise-grade infrastructure without the capital expenditure traditionally required for such capabilities.

The elasticity provided by multi-tenant architectures means that you can rapidly scale resources in response to fluctuating demand patterns common in manufacturing environments. During peak production periods, seasonal demand spikes, or when launching new product lines, additional compute capacity can be provisioned within minutes rather than the weeks or months required for traditional infrastructure procurement. This responsiveness fundamentally changes how industrial operations approach capacity planning, shifting from a model of over-provisioning to accommodate potential peaks to one of dynamic resource allocation aligned with actual demand.

Security concerns have historically been cited as barriers to cloud adoption in industrial settings, yet modern multi-tenant architectures incorporate security measures that often exceed what individual organizations can implement on-premises. Cloud providers employ dedicated security teams, implement continuous vulnerability scanning, maintain compliance certifications across multiple regulatory frameworks, and provide granular access controls that enable organizations to implement zero-trust security models. These capabilities help keep sensitive industrial data and intellectual property protected while you benefit from the scalability of shared infrastructure, provided that access policies, network rules, and encryption are configured correctly on the customer side.

### Horizontal scaling with Kubernetes orchestration for manufacturing workloads

Kubernetes has emerged as the de facto standard for container orchestration, providing industrial organizations with powerful mechanisms for managing complex application deployments across distributed infrastructure. In manufacturing contexts, Kubernetes enables horizontal scaling whereby additional container instances are automatically deployed across a cluster of machines as workload demands increase. This approach proves particularly valuable for applications processing shop floor data, quality management systems, and production planning tools that experience variable load patterns throughout production cycles.

The architecture of Kubernetes provides self-healing capabilities that significantly enhance the reliability of industrial applications. When a container fails or becomes unresponsive, Kubernetes automatically restarts it or redeploys it to a healthy node within the cluster. This automated recovery mechanism ensures that critical manufacturing systems maintain high availability without requiring manual intervention from IT teams, reducing downtime and improving overall operational efficiency.
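The self-healing behavior described above can be pictured as a reconciliation loop that compares observed state with desired state and emits corrective actions. The following is a minimal sketch in plain Python, not the Kubernetes API: the `Container` class, the `reconcile` function, the node names, and the "first healthy node" placement rule are all hypothetical simplifications.

```python
from dataclasses import dataclass


@dataclass
class Container:
    name: str
    node: str
    healthy: bool = True


def reconcile(containers, healthy_nodes):
    """Return the actions a controller would take to restore the desired state."""
    actions = []
    for c in containers:
        if c.node not in healthy_nodes:
            # Node is gone: move the workload to the first healthy node (toy placement).
            target = sorted(healthy_nodes)[0]
            actions.append(f"reschedule {c.name} from {c.node} to {target}")
            c.node, c.healthy = target, True
        elif not c.healthy:
            # Container failed on a healthy node: restart it in place.
            actions.append(f"restart {c.name} on {c.node}")
            c.healthy = True
    return actions


fleet = [
    Container("mes-api", "node-a"),
    Container("quality-worker", "node-b", healthy=False),
    Container("shopfloor-ingest", "node-c"),
]
actions = reconcile(fleet, healthy_nodes={"node-a", "node-b"})
for action in actions:
    print(action)
```

A real cluster runs this kind of loop continuously and uses health probes and a scheduler to decide where workloads go; the sketch only shows the compare-and-correct idea.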

### Auto-scaling mechanisms in AWS EC2 and Azure Virtual Machine Scale Sets

Amazon Web Services EC2 Auto Scaling and Azure Virtual Machine Scale Sets provide infrastructure-level elasticity that complements container-based orchestration strategies. These services monitor application performance metrics and automatically adjust the number of virtual machine instances running your workloads based on predefined policies. For industrial applications with predictable patterns—such as end-of-shift reporting, batch processing of quality data, or periodic supply chain analytics—you can configure scheduled scaling that aligns compute capacity with anticipated demand.

Target tracking scaling policies enable you to define desired performance characteristics, such as maintaining average CPU utilization at 70% or ensuring that application response times remain below specific thresholds. The auto-scaling service then continuously adjusts capacity to maintain these targets, optimizing both performance and cost. This approach proves particularly effective for industrial analytics workloads where processing requirements fluctuate based on production volumes, shift schedules, and operational complexity.
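To make the 70% CPU target concrete, here is a small sketch of a proportional scaling rule. It mirrors the formula documented for the Kubernetes Horizontal Pod Autoscaler; applying it to EC2 Auto Scaling or Virtual Machine Scale Sets is a simplifying assumption, since the managed services also apply cooldowns, warm-up periods, and alarm evaluation windows. The function name and the `min_size` and `max_size` bounds are illustrative.

```python
import math


def desired_capacity(current_instances, observed_metric, target_metric,
                     min_size=1, max_size=20):
    """Proportional estimate of the instance count needed to reach the target."""
    if current_instances <= 0 or target_metric <= 0:
        raise ValueError("current_instances and target_metric must be positive")
    raw = math.ceil(current_instances * observed_metric / target_metric)
    # Clamp to the configured group size limits.
    return max(min_size, min(max_size, raw))


# Four instances averaging 91% CPU against a 70% target: scale out.
print(desired_capacity(4, observed_metric=91.0, target_metric=70.0))
# Six instances averaging 35% CPU against a 70% target: scale in.
print(desired_capacity(6, observed_metric=35.0, target_metric=70.0))
```

Rounding up rather than down is a deliberate choice in this sketch: it errs toward slightly more capacity so that the metric lands at or below the target.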

### Serverless computing models for event-driven industrial IoT processing


Serverless computing services such as AWS Lambda, Azure Functions, and Google Cloud Functions allow you to run small, event-driven workloads without managing servers. In industrial IoT environments, serverless functions can be triggered by sensor events, message queue updates, or changes in time-series data, making them ideal for processing bursts of telemetry from machinery, energy meters, or production lines. You only pay for the execution time consumed, which can dramatically reduce costs for spiky workloads like exception handling, alert generation, or on-demand report creation.
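As an illustration, an event-driven function for alert generation might look like the sketch below. The `handler(event, context)` signature is the one AWS Lambda uses for Python functions; the event shape (a `records` list with JSON `body` strings), the field names, and the vibration threshold are assumptions made for this example and would depend on the actual trigger.

```python
import json

VIBRATION_LIMIT_MM_S = 7.1  # hypothetical alert threshold


def handler(event, context):
    """Lambda-style entry point: scan a batch of readings and return alerts."""
    records = event.get("records", [])
    alerts = []
    for record in records:
        reading = json.loads(record["body"])
        if reading["vibration_mm_s"] > VIBRATION_LIMIT_MM_S:
            alerts.append({
                "machine_id": reading["machine_id"],
                "vibration_mm_s": reading["vibration_mm_s"],
            })
    return {"processed": len(records), "alerts": alerts}


# Local invocation with a hand-built event; no cloud account is needed.
sample_event = {"records": [
    {"body": json.dumps({"machine_id": "press-07", "vibration_mm_s": 3.2})},
    {"body": json.dumps({"machine_id": "press-09", "vibration_mm_s": 9.8})},
]}
result = handler(sample_event, context=None)
print(result)
```

Because the function keeps no state between invocations, the platform can run many copies in parallel when telemetry arrives in bursts.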

From a scalability perspective, serverless platforms automatically handle concurrency and horizontal scaling behind the scenes. When thousands of IoT devices suddenly send status updates, for example at the start of a production shift, the cloud provider starts additional function instances to keep processing latency low, up to the concurrency limits configured for your account. This elasticity helps real-time industrial IoT processing remain reliable as device fleets grow, without requiring you to constantly tune infrastructure.

Serverless also accelerates time-to-market for industrial applications. OT and IT teams can focus on writing business logic for condition monitoring, anomaly scoring, or workflow routing instead of provisioning VMs or configuring clusters. Combined with managed services such as managed message brokers and time-series databases, serverless computing becomes the glue that connects disparate industrial systems into a responsive, event-driven architecture.

### Container-based deployment strategies using Docker in production environments

While serverless is powerful for granular, event-driven tasks, many industrial applications (such as MES platforms, SCADA extensions, or advanced analytics dashboards) are better suited to container-based deployment. Docker containers provide a consistent runtime environment that packages application code, dependencies, and configuration into a portable unit. This consistency is crucial in industrial operations, where applications often need to move between on-premises edge gateways, private clouds, and public cloud regions.

In production environments, you can standardize Docker images for core manufacturing services, such as data collectors, protocol translators, or AI inference engines. These images are stored in private registries like Amazon ECR, Azure Container Registry, or Harbor and deployed through CI/CD pipelines. This approach reduces configuration drift and makes it far easier to roll out updates across multiple plants, lines, or regions without disrupting critical operations.

Container-based deployment also supports blue-green and canary release strategies that minimize downtime and risk. For example, you might route a small percentage of machine data to a new version of a quality inspection microservice running in a separate container deployment. If performance metrics confirm that defect detection accuracy improves, you can gradually shift more traffic and eventually retire the old version—much like gradually switching over a conveyor line to a new process while keeping the old one as backup.
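The canary idea above, routing a small percentage of machine data to the new version, can be sketched with deterministic hash-based bucketing. This is one possible approach under stated assumptions, not how any particular service mesh or load balancer implements it; the `route` function and the machine ID format are hypothetical.

```python
import hashlib


def route(machine_id, canary_percent):
    """Deterministically assign a machine to the 'canary' or 'stable' deployment."""
    digest = hashlib.sha256(machine_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


# Estimate the share of a 1,000-machine fleet that lands on the canary at 10%.
fleet = [f"machine-{i}" for i in range(1000)]
share = sum(route(m, 10) == "canary" for m in fleet) / len(fleet)
print(f"canary share at 10%: {share:.1%}")
```

Hashing the machine ID means a given machine always sees the same version at a given percentage, and raising the percentage only ever moves machines from stable to canary, never back.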

## Real-time data processing with edge-cloud hybrid models

As industrial systems become more connected, organizations are realizing that not all workloads belong exclusively in the cloud or at the edge. Edge-cloud hybrid models combine on-premises processing close to the machines with cloud-based analytics, storage, and orchestration. This architecture is essential for scalable industrial operations where latency, bandwidth, and regulatory requirements vary across sites and use cases.

Think of the edge as the real-time nervous system and the cloud as the long-term memory and intelligence center. Time-critical decisions—such as shutting down a machine to prevent damage—are handled locally, while trend analysis, optimization algorithms, and fleet-wide benchmarking are executed in the cloud. This division of responsibilities helps you balance performance, cost, and resilience while still benefiting from the scalability of cloud computing.

### AWS IoT Greengrass and Azure IoT Edge for latency-sensitive operations

AWS IoT Greengrass and Azure IoT Edge are purpose-built platforms that extend cloud capabilities to on-premises devices and gateways. They allow you to deploy and run containerized workloads, machine learning models, and rules engines directly on edge hardware, synchronizing with the cloud when connectivity is available. For latency-sensitive operations—like real-time control loops, safety interlocks, or high-speed vision inspection—this edge execution is indispensable.

With these platforms, you can define deployment manifests that specify which components, modules, or Lambda functions run at each plant or production line. For example, a Greengrass core device might run a local rules engine for threshold alerts, buffer sensor data in case of network outages, and periodically batch-upload compressed datasets to the cloud for deeper analysis. Azure IoT Edge similarly enables modules for protocol translation, OPC UA integration, and local stream processing to run alongside AI models on industrial PCs.
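The buffer-and-batch-upload behavior mentioned above can be sketched as a small store-and-forward buffer. This is plain Python for illustration and does not use the Greengrass or IoT Edge SDKs; the class name, the `upload` callback, and the drop-oldest policy are assumptions.

```python
from collections import deque
from itertools import islice


class StoreAndForwardBuffer:
    """Bounded local buffer that uploads in batches when the WAN link is up."""

    def __init__(self, max_readings, batch_size):
        self.pending = deque(maxlen=max_readings)  # oldest reading is dropped when full
        self.batch_size = batch_size

    def add(self, reading):
        self.pending.append(reading)

    def flush(self, upload, link_up):
        """Send pending readings oldest-first; return how many were uploaded."""
        sent = 0
        while link_up and self.pending:
            batch = list(islice(self.pending, self.batch_size))
            upload(batch)  # if this raises, nothing has been removed yet
            for _ in batch:
                self.pending.popleft()
            sent += len(batch)
        return sent


uploaded = []
buf = StoreAndForwardBuffer(max_readings=5, batch_size=2)
for seq in range(7):
    buf.add({"seq": seq})

sent_offline = buf.flush(uploaded.append, link_up=False)  # outage: keep buffering
sent_online = buf.flush(uploaded.append, link_up=True)    # link restored: drain
print(sent_offline, sent_online, uploaded)
```

Removing readings only after a successful upload trades possible duplicates for no silent loss, which is usually the safer default for telemetry; a bounded buffer makes the outage behavior explicit instead of exhausting gateway memory.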

By managing these edge workloads centrally from the cloud, you maintain consistency and visibility across your entire industrial footprint. Updates can be rolled out incrementally, logs and metrics are collected for compliance and troubleshooting, and you retain the ability to fall back to safe local operation if the WAN link fails. This combination of autonomy and centralized governance is a key reason edge-cloud hybrids are becoming a standard pattern in smart factories.

### Stream processing frameworks: Apache Kafka and Google Cloud Pub/Sub integration

Industrial systems increasingly rely on stream processing frameworks to handle continuous flows of telemetry, events, and logs. Apache Kafka has become a reference technology for building scalable, fault-tolerant data pipelines in manufacturing, while Google Cloud Pub/Sub offers a fully managed alternative in the Google ecosystem. These platforms enable you to ingest, buffer, and route millions of messages per second from IoT gateways, MES and ERP platforms, and external partners.

In a typical architecture, factories publish events—such as machine states, quality checks, and logistics updates—into Kafka topics. Downstream services subscribe to these topics to drive dashboards, trigger alerts, or feed analytics and machine learning models. Because Kafka decouples producers from consumers, you can add new applications, such as a predictive maintenance service or a digital twin dashboard, without modifying the existing equipment integration.
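The decoupling of producers from consumers can be shown with a toy in-memory broker that keeps an append-only log per topic and a read offset per consumer group. It only imitates the concept; it is not the Kafka or Pub/Sub client API, and the `Broker` class, topic name, and group names are hypothetical.

```python
from collections import defaultdict


class Broker:
    def __init__(self):
        self.log = defaultdict(list)     # topic -> append-only list of events
        self.offsets = defaultdict(int)  # (topic, group) -> next index to read

    def publish(self, topic, event):
        self.log[topic].append(event)

    def poll(self, topic, group):
        """Return events this consumer group has not seen yet and advance its offset."""
        start = self.offsets[(topic, group)]
        events = self.log[topic][start:]
        self.offsets[(topic, group)] = len(self.log[topic])
        return events


broker = Broker()
broker.publish("machine-states", {"machine": "cnc-01", "state": "RUNNING"})
broker.publish("machine-states", {"machine": "cnc-02", "state": "IDLE"})
first_dashboard_read = broker.poll("machine-states", group="dashboard")

broker.publish("machine-states", {"machine": "cnc-01", "state": "FAULT"})
# A new consumer is added later; the producer code above is untouched.
maintenance_read = broker.poll("machine-states", group="predictive-maintenance")
second_dashboard_read = broker.poll("machine-states", group="dashboard")
print(len(first_dashboard_read), len(maintenance_read), len(second_dashboard_read))
```

Each group tracks its own position in the log, so the newly added predictive maintenance consumer can replay earlier events without affecting what the dashboard has already read.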

Managed offerings like Confluent Cloud, Amazon MSK, and Google Cloud Pub/Sub reduce the operational overhead of running distributed messaging clusters. They provide built-in scaling, encryption, and observability tooling, allowing you to focus on business logic rather than cluster maintenance. For scalable industrial operations, this means you can reliably stream data from dozens of plants into centralized analytics platforms, enabling near real-time decision-making across your supply chain.

### 5G-enabled edge computing nodes in smart factory implementations

The emergence of private 5G networks is transforming how factories connect machines, robots, and mobile assets. 5G-enabled edge computing nodes—often compact servers or gateways placed on the shop floor—leverage ultra-low latency and high bandwidth to run demanding workloads such as real-time robotics coordination, AR-assisted maintenance, or high-resolution video analytics. These nodes act as mini data centers, bringing cloud-like processing power into the production environment.

By combining 5G with edge computing, manufacturers can replace or augment wired fieldbuses with flexible, software-defined networks. This is particularly valuable in dynamic environments like automotive assembly lines or warehouses with autonomous mobile robots, where fixed cabling can become a bottleneck. Workloads can be dynamically offloaded between edge nodes and the central cloud depending on performance requirements and network conditions, ensuring consistent quality of service.

From a scalability standpoint, 5G-enabled edge nodes allow you to standardize an architecture template and replicate it across sites. Each plant can deploy a set of edge servers with pre-configured containers for vision, quality, and safety functions, all managed by a central orchestration plane. As your operations expand, adding a new facility becomes more like deploying a new region in the cloud than building a bespoke IT system from scratch.

### Time-series database solutions: InfluxDB and TimescaleDB for sensor data

Sensor data in industrial operations is inherently time-series in nature: temperatures, pressures, speeds, and vibrations all change over time and must be tracked at high resolution. General-purpose relational databases are not optimized for this pattern, which is why specialized time-series databases like InfluxDB and TimescaleDB have gained traction in industrial analytics. These systems provide high-ingest rates, efficient compression, and powerful query capabilities tailored for time-based data.

InfluxDB offers a purpose-built time-series engine with a simple line protocol and a flexible retention policy mechanism, making it well suited for collecting high-frequency telemetry at the edge or in the cloud. TimescaleDB, implemented as an extension to PostgreSQL, combines SQL familiarity with optimizations for large time-series datasets, which can be very appealing if your teams already rely heavily on relational databases. Both options support downsampling and rollups (through continuous queries or tasks in InfluxDB and continuous aggregates in TimescaleDB), which help you retain granular data where needed while summarizing older data for long-term trend analysis.
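Downsampling itself is a simple idea: group raw points into fixed time buckets and keep one aggregate per bucket. The sketch below does this in plain Python so the logic is visible; in practice the database would perform the rollup, and the function name, the sample readings, and the 60-second bucket are illustrative assumptions.

```python
from collections import defaultdict


def downsample_mean(points, bucket_seconds):
    """Average (timestamp, value) points into fixed-width time buckets."""
    buckets = defaultdict(list)
    for ts, value in points:
        bucket_start = ts - (ts % bucket_seconds)
        buckets[bucket_start].append(value)
    return [(start, sum(vals) / len(vals)) for start, vals in sorted(buckets.items())]


# Temperature readings as (seconds since start, degrees Celsius).
raw = [(0, 20.0), (20, 22.0), (40, 24.0), (60, 30.0), (100, 32.0)]
rollup = downsample_mean(raw, bucket_seconds=60)
print(rollup)  # [(0, 22.0), (60, 31.0)]
```

Five raw points collapse into two one-minute averages, which is the same trade of resolution for storage that retention and rollup policies make at much larger scale.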

In a scalable industrial architecture, time-series databases often sit behind message brokers and ingestion layers, storing normalized streams of sensor data for use by dashboards, AI models, and reporting tools. By choosing managed offerings—such as InfluxDB Cloud or Timescale Cloud—you can offload operational tasks like backups, clustering, and patching, ensuring that as data volumes grow into billions of points, your storage and query performance keep pace without major re-engineering.

## Enterprise resource planning migration to SaaS platforms

Cloud computing is not only transforming shop floor systems; it is also reshaping core business platforms like Enterprise Resource Planning (ERP). Moving ERP to Software as a Service (SaaS) models enables industrial companies to standardize processes across regions, reduce upgrade complexity, and align IT spending with usage. For organizations seeking scalable industrial operations, SaaS ERP becomes the backbone that connects finance, procurement, production, and logistics into a single, cloud-native environment.

Legacy on-premises ERP deployments often suffer from heavy customization, version fragmentation, and long release cycles. By contrast, cloud ERP platforms deliver regular feature updates, embedded analytics, and native integration with other cloud services. This shift allows you to redirect scarce IT resources from maintaining legacy systems to driving transformation initiatives—such as advanced planning, integrated business planning, or real-time cost tracking.

### SAP S/4HANA Cloud versus on-premises deployment models

SAP S/4HANA is a flagship example of how ERP can be deployed on-premises or as a multi-tenant SaaS solution. On-premises or single-tenant hosted deployments provide maximum control and customization, which can be important for highly specialized industries or strict regulatory environments. However, they also carry the burden of infrastructure management, upgrades, and capacity planning—challenges that become more acute as your operations expand globally.

SAP S/4HANA Cloud, on the other hand, offers a standardized, best-practice-based model with rapid implementation templates for manufacturing, supply chain, and asset management. Because it is delivered as SaaS, performance scaling, security patching, and high availability are managed by the provider. This can significantly reduce downtime and accelerate the adoption of new capabilities such as embedded analytics and integrated business planning.

When comparing these deployment models, many industrial organizations adopt a pragmatic approach: highly regulated plants or unique processes may remain on specialized, controlled environments, while more standard operations move to S/4HANA Cloud. Over time, as cloud governance and compliance mature, the balance often shifts toward SaaS, especially when executives see the benefits in agility and total cost of ownership.

### Oracle NetSuite and Microsoft Dynamics 365 cloud ERP capabilities

Beyond SAP, Oracle NetSuite and Microsoft Dynamics 365 have emerged as leading cloud ERP platforms, especially for mid-market and rapidly growing industrial firms. NetSuite offers a unified suite that covers financials, order management, inventory, and light manufacturing, making it attractive for companies seeking a streamlined, globally accessible system. Its multi-subsidiary capabilities help organizations manage complex corporate structures, multiple currencies, and global tax requirements from a single cloud instance.

Microsoft Dynamics 365 blends ERP and CRM capabilities, with modules for finance, supply chain management, field service, and customer engagement. For industrial organizations heavily invested in the Microsoft ecosystem—using Azure, Power BI, and Microsoft 365—Dynamics 365 can create a highly integrated environment where operational and customer data flow seamlessly. This integration is particularly powerful for scenarios like connected field service, where IoT alerts trigger work orders, technician scheduling, and billing workflows automatically.

Both platforms support extensibility through low-code tools and APIs, allowing you to tailor processes without heavy custom coding. As your industrial operations scale, this flexibility helps you localize workflows for specific plants or regions while still maintaining a standardized global template—a crucial balance between control and adaptability.

### Data sovereignty compliance in multi-regional cloud ERP architectures

As soon as ERP moves into the cloud, questions about data sovereignty and regulatory compliance come to the forefront. Industrial companies operating across Europe, North America, and Asia must contend with regulations such as GDPR, regional data residency laws, and industry-specific standards. Multi-regional cloud ERP architectures need to ensure that sensitive data—such as employee records, financial details, or controlled technical information—remains within approved jurisdictions.

Modern SaaS ERP providers address these concerns by offering data centers in multiple regions and configurable data residency options. However, it remains your responsibility to define policies on where different data types may be stored, how cross-border data transfers are handled, and what encryption and access controls are enforced. You may choose, for example, to keep personal data in-region while allowing anonymized operational metrics to be replicated globally for consolidated reporting.
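A residency policy of this kind can be expressed as data and checked before any replication job runs. The sketch below is a hypothetical example: the data classes, region names, and the deny-by-default rule are assumptions for illustration, not a mapping of any regulation or ERP product setting.

```python
# Hypothetical policy: which regions may hold each class of data.
RESIDENCY_POLICY = {
    "personal": {"eu-central"},
    "financial": {"eu-central", "eu-west"},
    "anonymized_metrics": {"eu-central", "eu-west", "us-east", "ap-southeast"},
}


def allowed_targets(data_class, candidate_regions):
    """Return the candidate regions where this data class may be replicated."""
    permitted = RESIDENCY_POLICY.get(data_class, set())  # unknown classes: deny
    return sorted(r for r in candidate_regions if r in permitted)


print(allowed_targets("personal", ["eu-central", "us-east"]))
print(allowed_targets("anonymized_metrics", ["eu-central", "us-east"]))
```

Keeping the policy in one reviewable structure gives legal, compliance, and engineering teams a shared artifact, and denying unknown data classes by default avoids accidental cross-border copies when a new data type appears.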

Designing compliant multi-regional architectures often involves more than just ERP. Identity management, integration platforms, and reporting tools must all align with data residency requirements. By involving legal, compliance, and security teams early in your cloud ERP strategy, you can avoid costly rework later and ensure that your move to scalable, cloud-based operations does not introduce regulatory risk.

## Predictive maintenance through cloud-based machine learning pipelines

Predictive maintenance has become one of the most compelling use cases for cloud computing in industrial operations. By combining sensor data, maintenance logs, and contextual information such as operating conditions, organizations can build machine learning models that anticipate failures before they occur. Cloud-based ML pipelines provide the scalable storage, compute, and tooling required to train, deploy, and continuously improve these models across entire fleets of assets.

Instead of relying solely on fixed maintenance schedules or reactive repairs, predictive strategies help you optimize spare parts inventory, reduce unplanned downtime, and extend asset lifetimes. The cloud acts as the central hub where data from multiple plants and asset types is aggregated, enabling models to learn from a much broader set of patterns than any single facility could provide. As a result, the accuracy and robustness of predictive insights tend to improve over time.

### TensorFlow and PyTorch model training on Google Cloud AI Platform

Google Cloud AI Platform and its successor, Vertex AI, are designed to support end-to-end machine learning workflows using popular frameworks like TensorFlow and PyTorch. For predictive maintenance, data scientists can leverage managed Jupyter notebooks, scalable training clusters, and specialized hardware such as GPUs and TPUs to experiment with deep learning models on large historical datasets. This is particularly useful when dealing with high-frequency vibration data or multivariate time-series from complex equipment.

Because training jobs can be distributed across many nodes, you can iterate quickly on model architectures—testing different neural network structures, feature engineering strategies, and hyperparameters. The platform’s managed services handle infrastructure provisioning, logging, and experiment tracking, which saves valuable time and reduces operational complexity. Once a satisfactory model is identified, it can be packaged and deployed as a managed online prediction service or batch scoring job.

For industrial teams, the combination of TensorFlow or PyTorch with Google Cloud means you do not need to build and maintain your own GPU clusters or ML toolchains. You can scale up training resources for intensive experiments and scale them down afterward, paying only for what you use. This elasticity is crucial when predictive maintenance projects move from pilot to full-scale deployment across thousands of machines.

### Amazon SageMaker for anomaly detection in industrial equipment

Amazon SageMaker offers a similarly comprehensive environment for building, training, and deploying machine learning models, with specific strengths in operationalization and integration with the broader AWS ecosystem. For industrial anomaly detection, you can use built-in algorithms such as Random Cut Forest or bring your own models developed in frameworks like XGBoost, TensorFlow, or PyTorch. SageMaker handles data ingestion from S3, feature processing, training, and deployment into scalable inference endpoints.

One practical pattern is to stream sensor data from AWS IoT Core into Kinesis or Kafka, then periodically aggregate and store it in S3 for model training. SageMaker can then be scheduled to retrain models as new data accumulates, ensuring that anomaly detectors remain accurate as equipment ages or operating conditions change. This closes the loop between data generation and continuous model refinement, a key requirement for robust predictive maintenance.
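To show the fit, score, and retrain cycle in miniature, the sketch below uses a z-score detector from the Python standard library. This is a deliberately simple stand-in and is not Random Cut Forest or the SageMaker SDK; the function names, the sample vibration values, and the threshold of 3 standard deviations are assumptions.

```python
import statistics


def fit_baseline(values):
    """'Train' by summarizing normal behavior as a mean and standard deviation."""
    return statistics.mean(values), statistics.stdev(values)


def is_anomaly(value, baseline, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    mean, stdev = baseline
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > threshold


# Initial training window of vibration readings (mm/s) from healthy operation.
history = [4.0, 4.2, 3.9, 4.1, 4.0, 3.8, 4.0]
baseline = fit_baseline(history)
print(is_anomaly(4.1, baseline), is_anomaly(6.5, baseline))

# Later, the equipment has aged and normal vibration has drifted upward.
history += [5.9, 6.1, 6.0, 6.2, 5.8, 6.0, 6.1]
retrained = fit_baseline(history)
print(is_anomaly(6.5, retrained))
```

After retraining on the newer data, the same 6.5 mm/s reading is no longer flagged, which illustrates why detectors need periodic refitting as operating conditions change.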

Because SageMaker integrates with AWS Identity and Access Management (IAM), CloudWatch, and CloudTrail, it also supports the governance and observability demands of industrial environments. You can monitor model performance, track inference latency, and audit who deployed which models where—critical capabilities when ML-driven recommendations start influencing high-value operational decisions.

### Digital twin simulation using NVIDIA Omniverse and Azure Digital Twins

Digital twin technology takes predictive maintenance a step further by creating virtual representations of physical assets, processes, or even entire plants. Platforms like NVIDIA Omniverse and Azure Digital Twins allow you to simulate and visualize complex systems in 3D, feeding them with real-time data from sensors and control systems. The result is a living model that reflects current operating conditions and can be used to test scenarios, diagnose issues, and optimize performance.

NVIDIA Omniverse focuses on high-fidelity visualization and simulation, especially valuable for robotics, autonomous systems, and ergonomics studies. Azure Digital Twins provides a semantic modeling environment where you can define relationships between assets, spaces, and processes, integrating with IoT Hub, Time Series Insights, and analytics services. Together, they enable you to answer questions like: What happens to line throughput if we change this conveyor speed? How will temperature variations in one zone affect equipment failure rates?
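The conveyor speed question can be explored with a very small what-if model. The sketch assumes a purely serial line whose throughput is limited by its slowest stage and ignores buffers, downtime, and changeovers; it uses neither the Omniverse nor the Azure Digital Twins API, and the stage names and rates are hypothetical.

```python
def line_throughput(stage_rates):
    """Throughput of a serial line is limited by its slowest stage (units/hour)."""
    return min(stage_rates.values())


def bottleneck(stage_rates):
    """Name of the stage that currently limits the line."""
    return min(stage_rates, key=stage_rates.get)


twin = {"infeed_conveyor": 120, "filler": 95, "capper": 110, "palletizer": 100}
print(line_throughput(twin), bottleneck(twin))

# What-if: slow the infeed conveyor to 90 units/hour without touching the plant.
scenario = dict(twin, infeed_conveyor=90)
print(line_throughput(scenario), bottleneck(scenario))
```

Copying the model before changing it keeps the baseline intact, which is the essence of testing a scenario on the twin rather than on the physical line.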

By anchoring predictive maintenance models within a digital twin, you can move beyond predicting individual component failures to understanding system-level impacts. For example, a predicted failure on a single pump can be translated into expected production loss, energy waste, or maintenance crew workload across the plant. This holistic view helps prioritize interventions and aligns maintenance decisions with business outcomes, not just technical metrics.

### MLOps workflows with Kubeflow for continuous model deployment

As predictive maintenance initiatives mature, managing the lifecycle of multiple models across many assets becomes a significant challenge. MLOps—the application of DevOps principles to machine learning—addresses this by standardizing how models are built, tested, deployed, and monitored. Kubeflow, an open-source MLOps framework built on Kubernetes, is particularly well suited to industrial environments that already use container orchestration for other workloads.

With Kubeflow, you can define reproducible ML pipelines that encompass data extraction, feature engineering, training, validation, and deployment stages. These pipelines run on Kubernetes clusters either on-premises or in the cloud, giving you consistent tooling across development, staging, and production environments. This consistency reduces the risk of models behaving differently after deployment, a common source of frustration in early predictive maintenance projects.

Continuous deployment of models also becomes more manageable. When a new version of a predictive maintenance model passes acceptance tests, it can be automatically rolled out to a subset of assets, with performance monitored closely. If key metrics—such as false positive rate or lead time before failure—improve, the rollout can be expanded. If not, the system can roll back to the previous model version. This iterative approach aligns well with the experimental nature of machine learning while still honoring the reliability requirements of industrial operations.
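The expand-or-roll-back gate described above can be written as a small decision function. This is a sketch of one possible rule, not a Kubeflow API: the `ModelMetrics` type, the metric values, and the policy of requiring no regression plus at least one improvement are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelMetrics:
    false_positive_rate: float  # lower is better
    lead_time_hours: float      # warning time before failure; higher is better


def rollout_decision(current, candidate):
    """Expand only if neither metric got worse and at least one improved."""
    no_regression = (candidate.false_positive_rate <= current.false_positive_rate
                     and candidate.lead_time_hours >= current.lead_time_hours)
    improved = (candidate.false_positive_rate < current.false_positive_rate
                or candidate.lead_time_hours > current.lead_time_hours)
    return "expand" if no_regression and improved else "rollback"


production = ModelMetrics(false_positive_rate=0.12, lead_time_hours=36.0)
print(rollout_decision(production, ModelMetrics(0.08, 40.0)))
print(rollout_decision(production, ModelMetrics(0.15, 48.0)))
```

The second candidate warns earlier but raises more false alarms, so this conservative rule rolls it back; a team could instead weight the metrics by their business cost.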

## Supply chain visibility via cloud integration platforms

Scalable industrial operations depend not only on what happens inside the plant but also on the reliability and transparency of the broader supply chain. Cloud integration platforms—often described as iPaaS (Integration Platform as a Service)—play a crucial role in connecting ERP, MES, logistics providers, suppliers, and customers into a coherent, real-time information fabric. When data flows seamlessly across these systems, you gain end-to-end visibility into orders, inventory, shipments, and risks.

Modern platforms such as MuleSoft, Boomi (formerly Dell Boomi), and Azure Logic Apps provide prebuilt connectors, transformation tools, and workflow engines that reduce the time and effort required to integrate heterogeneous systems. Instead of managing a brittle web of point-to-point connections, you orchestrate data flows centrally, applying governance, monitoring, and security policies consistently. This architectural shift is analogous to replacing a patchwork of local roads with a well-managed highway network, where traffic is easier to route, monitor, and optimize.

For example, you can integrate supplier portals, transportation management systems, and warehouse management systems into a single cloud-based visibility layer. Planners then access dashboards that display real-time inbound and outbound flows, potential disruptions, and projected stockouts. With this level of transparency, decisions such as expediting shipments, reallocating inventory, or adjusting production schedules become more data-driven and timely, directly supporting scalable, resilient operations.
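A projected stockout is straightforward arithmetic once inventory, demand, and inbound shipments sit in one place. The sketch below assumes daily buckets and that receipts arrive before the day's demand is served; the function name and all quantities are hypothetical.

```python
def first_stockout_day(on_hand, daily_demand, inbound):
    """Return the first day index on which stock cannot cover demand, or None."""
    stock = on_hand
    for day, demand in enumerate(daily_demand):
        stock += inbound.get(day, 0)  # receipts arrive before the day's demand
        if stock < demand:
            return day
        stock -= demand
    return None


demand = [40, 40, 40, 40]  # units per day over a four-day horizon
planned = first_stockout_day(100, demand, inbound={3: 80})
expedited = first_stockout_day(100, demand, inbound={2: 80})
print(planned, expedited)
```

With the shipment arriving on day 3, stock runs out on day 2; expediting it by one day removes the stockout within the horizon, which is the kind of decision a shared visibility layer makes easier to justify.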

## Cost optimization strategies for industrial cloud workloads

As cloud usage grows across OT and IT, cost optimization becomes a strategic discipline rather than an afterthought. The very elasticity that makes cloud computing attractive can also lead to unexpected bills if not managed carefully. Industrial organizations therefore need structured approaches—often framed as FinOps practices—to align cloud spending with business value while maintaining performance and reliability.

One foundational strategy is rightsizing: regularly reviewing compute, storage, and database usage to ensure resources match actual demand. Underutilized virtual machines, overprovisioned storage tiers, and idle test environments are common sources of waste. By using cloud-native monitoring tools and cost analyzers, you can identify these inefficiencies and adjust instance types, autoscaling thresholds, or shutdown schedules. Think of it as tuning a production line—you wouldn’t run all machines at full speed regardless of demand, so you shouldn’t leave cloud resources running at peak capacity unnecessarily.
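A first-pass rightsizing review can be as simple as flagging instances whose average and peak CPU both stay low. In the sketch below the thresholds, instance names, and utilization samples are made up for illustration; a real review would pull metrics from the provider's monitoring service and also consider memory, I/O, and network.

```python
from statistics import mean


def rightsizing_candidates(cpu_samples, avg_threshold=20.0, peak_threshold=40.0):
    """Flag instances whose average and peak CPU (%) both stay under the thresholds."""
    return sorted(
        name for name, samples in cpu_samples.items()
        if samples and mean(samples) < avg_threshold and max(samples) < peak_threshold
    )


usage = {
    "analytics-batch": [12, 9, 15, 11],
    "mes-api": [55, 61, 72, 48],
    "test-env": [2, 1, 3, 2],
    "shift-report": [5, 6, 50, 4],  # low average, but a real end-of-shift peak
}
candidates = rightsizing_candidates(usage)
print(candidates)  # ['analytics-batch', 'test-env']
```

Checking the peak as well as the average protects bursty workloads such as end-of-shift reporting from being downsized on the strength of a quiet average.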

Another powerful tactic is leveraging pricing models such as reserved instances, savings plans, and spot instances for predictable or flexible workloads. Batch analytics, simulation jobs, and non-critical test environments are good candidates for discounted capacity, while 24/7 production systems may benefit from commitments that lock in lower rates. In addition, data lifecycle policies—archiving cold data to cheaper storage tiers and deleting obsolete logs—help control storage costs as telemetry volumes expand.
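Whether a commitment beats on-demand pricing comes down to a break-even number of hours. The prices below are invented for the arithmetic and are not any provider's rates; the function names are likewise illustrative.

```python
def breakeven_hours(on_demand_hourly, committed_monthly):
    """Monthly hours above which a fixed commitment is cheaper than on-demand."""
    return committed_monthly / on_demand_hourly


def cheaper_option(hours_per_month, on_demand_hourly, committed_monthly):
    on_demand_cost = hours_per_month * on_demand_hourly
    return "commitment" if committed_monthly < on_demand_cost else "on-demand"


ON_DEMAND = 0.50    # hypothetical price per instance-hour
COMMITTED = 200.00  # hypothetical fixed monthly price for the same instance

print(breakeven_hours(ON_DEMAND, COMMITTED))      # 400.0 hours
print(cheaper_option(730, ON_DEMAND, COMMITTED))  # 24/7 production system
print(cheaper_option(120, ON_DEMAND, COMMITTED))  # occasional batch analytics
```

At these example prices an always-on system (about 730 hours per month) is well past the 400-hour break-even, while a 120-hour batch workload is better left on-demand or moved to spot capacity.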

Finally, establishing cross-functional governance is key. Finance, operations, and IT teams should collaborate to define budgets, chargeback or showback models, and KPIs that link cloud consumption to business outcomes like throughput, uptime, or lead time. When engineers see the cost impact of architectural choices and are empowered with the right tools, they can design solutions that are both scalable and economically sustainable, ensuring that cloud computing remains an enabler—not a constraint—for industrial growth.