Industrial enterprises are under growing pressure to modernise. Legacy manufacturing systems, once reliable workhorses of production, now struggle to meet the demands of modern digital operations. The convergence of operational technology (OT) and information technology (IT) has created both opportunities and complexities that require strategic architectural thinking. Companies that fail to modernise their digital infrastructure risk falling behind competitors who embrace scalable, cloud-native solutions, particularly as operational complexity and regulatory requirements continue to increase.

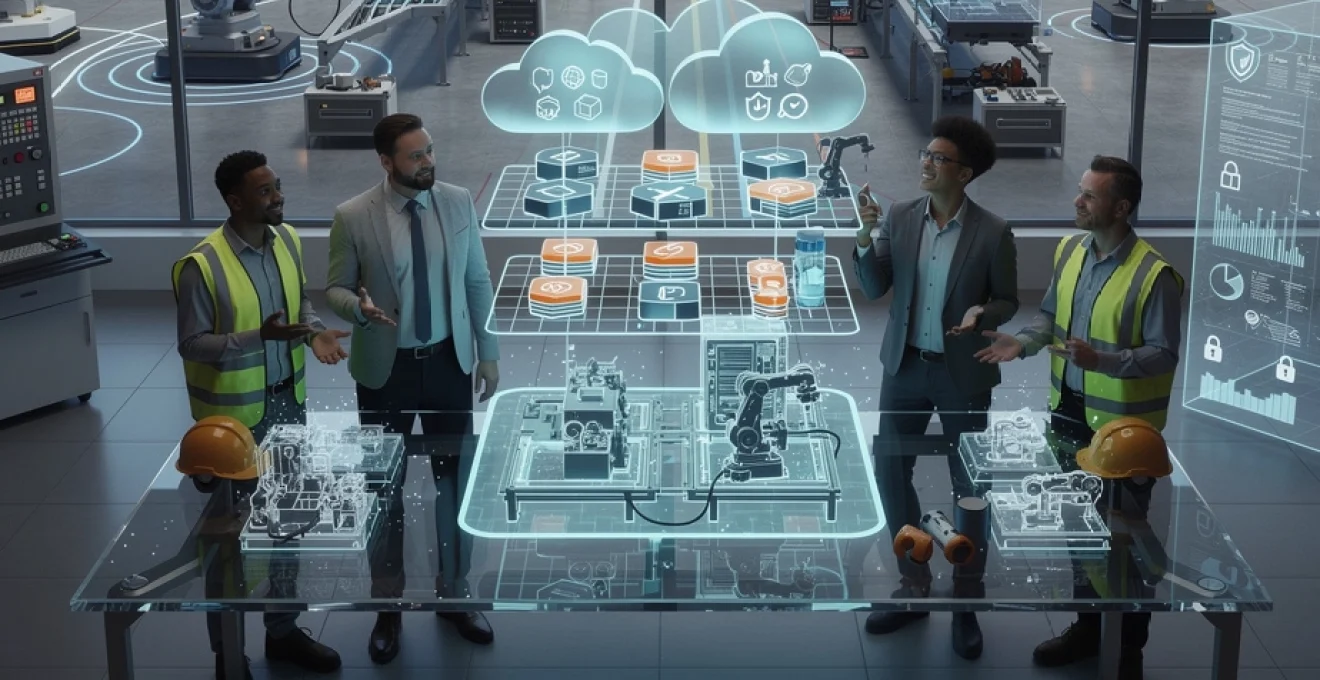

Enterprise digital transformation framework assessment for industrial operations

Digital transformation in industrial environments requires a comprehensive assessment of existing systems, processes, and organisational readiness. Unlike traditional enterprise transformations, industrial operations must contend with safety-critical systems, real-time processing requirements, and decades of accumulated technical debt. The assessment phase establishes the foundation for all subsequent architectural decisions and determines the pace at which transformation can safely proceed.

Modern industrial enterprises generate massive amounts of data, yet much remains locked in isolated systems, limiting visibility and slowing decision-making processes that could drive significant competitive advantages.

A thorough transformation framework assessment evaluates multiple dimensions simultaneously. Technical readiness encompasses existing infrastructure capacity, network architecture, and system interoperability. Organisational readiness examines change management capabilities, skills gaps, and cultural barriers to adoption. Operational readiness focuses on production continuity requirements, maintenance windows, and risk tolerance levels. These assessments reveal the constraints within which architectural decisions must operate and highlight areas requiring immediate attention before broader transformation initiatives can succeed.

Legacy system integration challenges in manufacturing environments

Manufacturing environments typically contain a diverse ecosystem of legacy systems that have evolved over decades of operational refinement. These systems often utilise proprietary protocols, outdated communication standards, and custom interfaces that create significant integration barriers. The challenge becomes particularly acute when attempting to extract real-time data for analytics purposes or integrate with modern cloud-based applications.

Legacy programmable logic controllers (PLCs) and distributed control systems (DCS) frequently lack modern connectivity options, requiring protocol conversion gateways and edge computing solutions. Historical investment in these systems means replacement is often economically unfeasible, necessitating creative integration approaches that preserve existing functionality whilst enabling new capabilities. The complexity multiplies when considering that different production lines may utilise equipment from various vendors, each with distinct communication protocols and data formats.

Successful legacy integration requires a phased approach that minimises operational disruption. This typically involves deploying industrial IoT gateways that can translate between legacy protocols and modern standards, implementing data historians that capture and contextualise information from multiple sources, and establishing secure communication channels that meet both IT security requirements and OT operational needs. The key lies in creating abstraction layers that shield modern applications from the complexity of underlying legacy systems.
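
The abstraction-layer idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real gateway: `RegisterSource`, `TextFrameSource`, the register map, and the `Reading` shape are hypothetical stand-ins for vendor-specific drivers, and no actual protocol library is used.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Reading:
    """Normalised reading that modern applications consume."""
    asset: str
    signal: str
    value: float
    unit: str


class Source(Protocol):
    """Anything that can return normalised readings."""
    def read(self) -> list[Reading]: ...


class RegisterSource:
    """Hypothetical adapter for a register-based device (raw integer registers)."""

    # register address -> (signal name, divisor, unit); illustrative values only
    REGISTER_MAP = {40001: ("temperature", 10, "degC"),
                    40002: ("pressure", 100, "bar")}

    def __init__(self, asset: str, registers: dict[int, int]):
        self.asset = asset
        self.registers = registers

    def read(self) -> list[Reading]:
        return [Reading(self.asset, name, self.registers[addr] / divisor, unit)
                for addr, (name, divisor, unit) in self.REGISTER_MAP.items()
                if addr in self.registers]


class TextFrameSource:
    """Hypothetical adapter for vendor text frames such as 'FLOW=12.5;L/min'."""

    def __init__(self, asset: str, frames: list[str]):
        self.asset = asset
        self.frames = frames

    def read(self) -> list[Reading]:
        readings = []
        for frame in self.frames:
            body, unit = frame.split(";", 1)
            name, raw = body.split("=", 1)
            readings.append(Reading(self.asset, name.lower(), float(raw), unit))
        return readings


def collect(sources: list[Source]) -> list[Reading]:
    """Applications call this and never see protocol-specific details."""
    return [reading for source in sources for reading in source.read()]
```

Consumers depend only on `Reading` and `collect`, so adding a new vendor protocol means writing one more adapter rather than touching every downstream application.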

SCADA and MES compatibility requirements for digital architecture

Supervisory Control and Data Acquisition (SCADA) systems and Manufacturing Execution Systems (MES) form the nervous system of modern industrial operations. These platforms must seamlessly integrate with new digital architecture whilst maintaining their critical operational functions. Compatibility requirements extend beyond simple data exchange to encompass real-time performance, fault tolerance, and operational continuity during system updates or maintenance activities.

SCADA systems and the controllers beneath them depend on deterministic communication with bounded response times, often measured in milliseconds at the control level. Digital architecture must respect these timing constraints whilst providing the flexibility needed for modern analytics and cloud integration. MES compatibility involves more complex data structures and business logic, requiring careful API design and transaction management to ensure data consistency across multiple systems and operational domains.

Industrial IoT device connectivity standards and protocols

The proliferation of Industrial Internet of Things (IIoT) devices has introduced new connectivity challenges that must be addressed through standardised protocols and robust device management frameworks. OPC Unified Architecture (OPC UA) has emerged as a leading standard for industrial communications, providing secure, reliable, and platform-independent data exchange capabilities that bridge traditional OT and modern IT systems.

MQTT (originally MQ Telemetry Transport) offers lightweight messaging capabilities ideal for resource-constrained devices and unreliable network conditions common in industrial environments. Time-Sensitive Networking (TSN) standards enable deterministic Ethernet communications crucial for real-time control applications. The challenge lies in creating unified architectures that support multiple protocols simultaneously whilst maintaining security, performance, and manageability at scale.

Industrial enterprises increasingly adopt a layered communication model, where field devices communicate via OPC UA or native protocols to edge gateways, which then publish normalised data streams over MQTT into a central industrial data backbone or Unified Namespace. This approach reduces tight coupling between producers and consumers of data and creates a scalable foundation for advanced analytics, AI workloads, and cross-site visibility.

Data governance frameworks for operational technology networks

As OT systems become more connected, data governance for industrial operations can no longer be an afterthought. A robust data governance framework defines how operational data is collected, classified, secured, and used across the enterprise. This is especially critical when sensitive production data, equipment performance metrics, and safety-related information flow beyond the plant floor into enterprise applications and cloud platforms.

Effective governance for OT networks starts with a clear inventory of data assets and data flows, including which systems generate which data, who consumes it, and where it is stored. Industrial enterprises benefit from defining data ownership at the domain level (for example, production, maintenance, quality) and establishing data stewardship roles responsible for data quality, lineage, and lifecycle management. Regulatory requirements such as data retention rules, export controls, and industry-specific safety standards must be embedded into these governance policies from the outset.

To operationalise data governance in OT environments, organisations increasingly deploy data catalogues, classification tools, and policy engines that can span both on‑premises and cloud systems. These capabilities make it easier to control access to high-value production data, enforce encryption and retention rules, and track how data is transformed for analytics and AI applications. When governance is aligned with the digital transformation framework, industrial enterprises gain the confidence to scale their digital architecture without losing control of their most critical operational information.
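
A policy engine of this kind can be reduced to a small sketch. The classification levels, the same-domain rule for confidential data, and the `DataAsset` fields below are assumptions made for illustration; a real deployment would take them from the organisation's own governance policy and tooling.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    SAFETY_CRITICAL = 3


@dataclass(frozen=True)
class DataAsset:
    """One catalogue entry: what the data is, who owns it, how long to keep it."""
    name: str
    owner_domain: str            # e.g. "production", "maintenance", "quality"
    classification: Classification
    retention_days: int


def can_read(asset: DataAsset, clearance: Classification, domain: str) -> bool:
    """Clearance must cover the classification; confidential data and above
    is additionally restricted to the owning domain (an assumed rule)."""
    if clearance < asset.classification:
        return False
    if asset.classification >= Classification.CONFIDENTIAL:
        return domain == asset.owner_domain
    return True


def is_expired(asset: DataAsset, created: date, today: date) -> bool:
    """True once a record has outlived the asset's retention period."""
    return today > created + timedelta(days=asset.retention_days)
```

Keeping ownership, classification, and retention on the same catalogue entry means access decisions and lifecycle decisions are driven by one record rather than by rules scattered across systems.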

Cloud infrastructure design patterns for industrial enterprise architecture

Cloud infrastructure has become a cornerstone of scalable digital architecture for industrial enterprises, but wholesale migration of OT workloads to the cloud is rarely feasible or desirable. Instead, leading organisations adopt cloud infrastructure design patterns that balance on‑premises control with cloud scalability, resilience, and innovation. These patterns must accommodate stringent latency requirements, data sovereignty concerns, and the need for continuous plant operations.

A well-architected industrial cloud environment often combines hybrid cloud deployment, edge computing, and multi-cloud strategies, reinforced by containerisation and API-driven integration. The objective is not to replace existing SCADA or MES systems overnight, but to surround and augment them with cloud-native capabilities for analytics, AI, and cross-site coordination. By choosing the right cloud design patterns, industrial enterprises can modernise at their own pace while preserving the reliability that plant operations demand.

Hybrid cloud deployment models using AWS industrial services

Hybrid cloud deployment has emerged as the prevailing pattern for industrial enterprises leveraging AWS industrial services. In this model, latency-sensitive control systems remain on-site, while data processing, storage, and advanced analytics are offloaded to AWS. Services such as AWS IoT SiteWise, AWS IoT Greengrass, and AWS IoT Core enable secure ingestion, modelling, and analysis of equipment data without disrupting existing OT systems.

A typical hybrid architecture for manufacturing plants uses edge gateways running AWS IoT Greengrass to collect data from PLCs, DCS, and sensors, perform local preprocessing, and securely transmit selected data to AWS for long-term storage and analytics. Critical KPIs and alerts can be computed both at the edge and in the cloud, ensuring that operations are not dependent on constant connectivity. For compliance-conscious industries, data residency configurations and VPC-based architectures help satisfy regulatory requirements while still reaping the benefits of cloud scalability.
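
One common form of local preprocessing is a deadband filter, which forwards a sample only when it differs enough from the last forwarded value. The sketch below is plain Python and does not use the AWS IoT Greengrass SDK; the function name and the tuple layout of the samples are assumptions for illustration.

```python
def deadband_filter(samples: list[tuple[float, float]],
                    deadband: float) -> list[tuple[float, float]]:
    """Keep only (timestamp, value) samples whose value moved by at least
    `deadband` since the last sample that was forwarded."""
    forwarded = []
    last_value = None
    for timestamp, value in samples:
        if last_value is None or abs(value - last_value) >= deadband:
            forwarded.append((timestamp, value))
            last_value = value
    return forwarded
```

With a deadband of 0.5, a temperature signal that hovers around 20.0 produces almost no upstream traffic, while a genuine step change is still transmitted immediately.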

The key to successful hybrid deployment is a clear segmentation of workloads: what must remain on-premises due to latency or safety constraints, what can be mirrored in the cloud for resilience, and what can be fully offloaded. By starting with targeted use cases—such as predictive maintenance or energy optimisation—industrial enterprises can validate their hybrid model, refine security and connectivity patterns, and then extend the architecture to additional production sites and business functions.
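
The three-way segmentation described above can be written down as an explicit rule, which makes the decision reviewable. The `Workload` fields and the default 100 ms cloud round-trip figure are illustrative assumptions, not measured values or vendor guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float      # tightest response time the workload tolerates
    safety_critical: bool
    needs_site_autonomy: bool  # must keep working if the cloud link is down


def placement(workload: Workload, cloud_round_trip_ms: float = 100.0) -> str:
    """Classify a workload as 'on-premises', 'mirrored', or 'cloud'."""
    if workload.safety_critical or workload.max_latency_ms < cloud_round_trip_ms:
        return "on-premises"
    if workload.needs_site_autonomy:
        return "mirrored"      # runs locally, replicated to the cloud
    return "cloud"
```

Running every candidate workload through one such function early in a programme tends to surface disagreements about latency and safety assumptions before any infrastructure is provisioned.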

Edge computing implementation with Microsoft Azure IoT Edge

Edge computing has become essential for industrial enterprises seeking to process data close to where it is generated while still integrating with cloud-based digital platforms. Microsoft Azure IoT Edge provides a powerful framework for deploying containerised workloads to industrial gateways and embedded devices, enabling analytics, AI models, and protocol translation to run at the edge. This reduces latency, preserves bandwidth, and improves resilience when connectivity to the cloud is intermittent.

An effective Azure IoT Edge implementation in manufacturing typically includes modules for OPC UA ingestion, local data filtering, and anomaly detection models deployed from Azure Machine Learning. These modules operate within a secure runtime that can be centrally managed from Azure, allowing you to roll out new capabilities across multiple plants without physical intervention. When connectivity is restored after an outage, buffered data synchronises automatically, ensuring consistent historical records in the central data lake or time-series store.
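
The buffering behaviour can be illustrated with a generic store-and-forward queue. This is a conceptual sketch, not the Azure IoT Edge implementation or its API: the `StoreAndForwardBuffer` class and the `send` callable (assumed to return `True` on successful delivery) are hypothetical.

```python
from collections import deque
from typing import Callable


class StoreAndForwardBuffer:
    """Queue messages locally and deliver them in order once the link is up."""

    def __init__(self, send: Callable[[str], bool], max_items: int = 10_000):
        self._send = send
        # A bounded deque drops the oldest message when full, so storage
        # on the gateway cannot grow without limit during a long outage.
        self._queue: deque[str] = deque(maxlen=max_items)

    def publish(self, message: str) -> None:
        """Enqueue a message, then try to drain the queue."""
        self._queue.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages oldest-first; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

A message is removed from the queue only after `send` reports success, so an outage in the middle of a flush leaves the remaining messages intact and in their original order.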

Industrial organisations should design their edge architecture with clear separation between control logic and analytical workloads. Safety-critical control remains in PLCs or dedicated controllers, while Azure IoT Edge focuses on non-critical decision support, monitoring, and optimisation. This separation aligns with defence-in-depth security principles and ensures that edge innovation does not compromise the stability of core production systems.

Multi-cloud strategy development for mission-critical applications

Relying on a single cloud provider for mission-critical industrial applications can introduce concentration risk, particularly when operations span multiple regions and regulatory jurisdictions. A multi-cloud strategy allows industrial enterprises to leverage best-of-breed services from different providers while maintaining resilience against outages, vendor lock-in, and geopolitical risks. The challenge lies in designing a digital architecture that remains manageable and coherent across clouds.

In practice, multi-cloud for industrial environments often means standardising on platform-agnostic building blocks—such as Kubernetes, MQTT-based data backbones, and open API standards—so that workloads can be deployed or migrated across AWS, Azure, and other providers with minimal rework. Data replication strategies must also be defined, specifying which operational datasets are synchronised between clouds and how consistency is maintained. For highly regulated industries, data residency policies may dictate that certain workloads run in regional clouds while central analytics are consolidated elsewhere.

A pragmatic multi-cloud approach starts with clear business drivers: is the goal higher availability, regulatory compliance, negotiating leverage, or local presence? From there, architects can define which capabilities are duplicated across clouds and which remain provider-specific. Governance, observability, and security controls must be designed to operate across this heterogeneous landscape, ensuring that teams have a unified view of system health and risk no matter where workloads run.

Container orchestration with Kubernetes in industrial environments

Containerisation and Kubernetes have transformed how modern applications are deployed and scaled, and industrial enterprises are increasingly adopting these technologies to modernise their digital architecture. Kubernetes provides a consistent orchestration layer that can run in on‑premises data centres, at the edge, or in any major cloud, making it a natural fit for hybrid and multi-cloud industrial strategies. When applied correctly, Kubernetes enables faster deployment cycles, improved resource utilisation, and more resilient industrial applications.

In industrial contexts, Kubernetes is often used to host microservices that support production operations, such as equipment monitoring dashboards, quality analytics, and scheduling optimisation engines. These services consume data from IIoT platforms and publish insights back into MES or ERP systems. Because Kubernetes abstracts underlying infrastructure, teams can standardise deployment pipelines and security policies across plants and cloud regions, reducing operational complexity as the digital landscape grows.

However, deploying Kubernetes in industrial environments requires careful planning around network connectivity, real-time constraints, and security segregation from core control systems. Production clusters may be logically or physically separated from OT networks, with controlled cross-domain gateways handling data exchange. By treating Kubernetes as a strategic platform rather than a tactical tool, industrial enterprises can create a flexible foundation for future AI and analytics workloads without compromising the stability of existing systems.

API gateway configuration for industrial data exchange

APIs are the connective tissue of modern digital architecture, and industrial enterprises rely on them to expose operational data to business systems, partners, and customer-facing applications. An API gateway acts as the central control point for this data exchange, providing routing, security, rate limiting, and observability for all API traffic. Properly configured, an API gateway transforms fragmented interfaces into a coherent, manageable integration layer.

For industrial use cases, API gateways sit between IIoT platforms, data lakes, MES systems, and external consumers such as supply chain partners or equipment OEMs. They enforce authentication and authorisation policies, inspect traffic for anomalies, and translate between internal data models and external contracts. This not only improves security but also reduces the integration burden when onboarding new consumers or exposing new data products, such as equipment performance APIs or real-time production status feeds.

When designing API gateway configurations, industrial architects should define clear API domains aligned with business capabilities: production, maintenance, quality, logistics, and so on. Each domain can have its own rate limits, access policies, and monitoring dashboards. By combining API gateways with standardised naming conventions and versioning strategies, you create an industrial data exchange layer that scales with growing demand while preserving backward compatibility and governance.
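
Per-domain rate limiting is often implemented with a token bucket. The sketch below shows the mechanism only; it is not the configuration syntax of any particular gateway product, and the `DOMAIN_LIMITS` values are invented for illustration.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_s` tokens/second."""

    def __init__(self, rate_per_s: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate_per_s
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self._clock()
        elapsed = now - self._last
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


# Hypothetical limits per API domain: (requests per second, burst capacity)
DOMAIN_LIMITS = {"production": (50, 100), "maintenance": (10, 20)}

buckets = {domain: TokenBucket(rate, burst)
           for domain, (rate, burst) in DOMAIN_LIMITS.items()}
```

Giving each domain its own bucket means a burst of maintenance queries cannot exhaust the budget reserved for production status feeds.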

Cybersecurity architecture framework for critical infrastructure protection

As industrial enterprises connect more assets and adopt cloud-native technologies, the attack surface of critical infrastructure expands dramatically. Cybersecurity architecture is therefore a foundational component of any scalable digital architecture for industrial enterprises. Rather than treating OT security as an isolated discipline, leading organisations are adopting integrated frameworks that span IT and OT, align with standards such as IEC 62443, and embed security into every layer of the architecture.

A robust cybersecurity framework for industrial operations combines zero trust principles, strong identity and access management, network segmentation, and continuous monitoring. The working assumption is that any network can be compromised, so systems are designed such that a breach in one area does not cascade into plant-wide disruption. By building security into architectural decisions from the outset, industrial enterprises can innovate with confidence, even in highly regulated or safety-critical environments.

Zero trust network implementation in OT environments

Zero trust security challenges the legacy assumption that everything inside the plant network is inherently trustworthy. In OT environments, this requires a shift away from flat networks and shared credentials towards fine-grained access controls, continuous verification, and least-privilege principles. Implementing zero trust in industrial settings is complex, but it is increasingly necessary to withstand sophisticated ransomware attacks and targeted threats against critical infrastructure.

A practical zero trust model for OT starts by identifying critical assets—such as SCADA servers, PLC networks, and safety systems—and surrounding them with strong access controls and continuous monitoring. Every connection is authenticated and authorised, whether it originates from the corporate network, remote maintenance vendors, or local engineering workstations. Micro-segmentation and secure gateways ensure that even if one segment is compromised, lateral movement is limited and high-value assets remain protected.
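
The "authenticate and authorise every connection" rule amounts to a default-deny decision function. In the sketch below the identities, targets, actions, and the `ALLOWED` table are all hypothetical; the point is that the request carries no notion of a trusted network location, and anything not explicitly listed is refused.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    identity: str
    authenticated: bool
    mfa_passed: bool
    device_trusted: bool
    target: str
    action: str


# Explicit allow list: (identity, target) -> permitted actions (illustrative)
ALLOWED = {
    ("vendor-acme", "plc-line3"): {"read-diagnostics"},
    ("eng-jsmith", "scada-server"): {"read", "write-setpoint"},
}


def authorise(request: AccessRequest) -> tuple[bool, str]:
    """Return (decision, reason); every check must pass, otherwise deny."""
    if not request.authenticated:
        return False, "unauthenticated"
    if not request.mfa_passed:
        return False, "mfa required"
    if not request.device_trusted:
        return False, "untrusted device"
    permitted = ALLOWED.get((request.identity, request.target), set())
    if request.action not in permitted:
        return False, "not explicitly allowed"
    return True, "allowed"
```

Returning a reason alongside the decision makes denied requests easy to log and review, which supports the continuous monitoring that zero trust depends on.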

Because many industrial systems were not designed with modern security in mind, zero trust implementation often relies on compensating controls, such as jump hosts, secure remote access platforms, and protocol-aware firewalls. Over time, as equipment is upgraded, native support for certificate-based authentication and encrypted communications can be introduced. The end goal is an OT network where trust is earned, continuously verified, and never assumed.

IEC 62443 compliance standards for industrial control systems

The IEC 62443 series has become the most widely adopted international standard for securing industrial automation and control systems. It provides a comprehensive framework covering everything from organisational processes and risk assessment to technical requirements for devices, systems, and zones. For industrial enterprises building scalable digital architectures, aligning with IEC 62443 offers a structured path to improving security maturity and demonstrating compliance to regulators and customers.

The standard introduces key concepts such as security levels, zones and conduits, and defence-in-depth strategies that map well to modern digital architectures. By classifying systems into zones based on their criticality and connectivity, and defining secure conduits between them, organisations can design network and access controls that reflect real-world risk. This zoning model also guides segmentation strategies in hybrid and multi-cloud environments, where industrial workloads span on‑premises and cloud infrastructure.
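
The zone and conduit model can be expressed as data plus a single lookup. This is a deliberately simplified sketch: the zone names, asset assignments, and permitted protocols are invented for illustration, and IEC 62443 itself specifies considerably more (security levels per zone, for example) than this check captures.

```python
# Asset -> zone assignment (hypothetical plant)
ZONES = {
    "plc-line3": "control",
    "scada-server": "supervisory",
    "historian": "dmz",
    "erp": "enterprise",
}

# Conduits are directional: (source zone, destination zone) -> protocols
CONDUITS = {
    ("control", "supervisory"): {"opcua"},
    ("supervisory", "dmz"): {"opcua", "https"},
    ("dmz", "enterprise"): {"https"},
}


def flow_permitted(source: str, destination: str, protocol: str) -> bool:
    """A cross-zone flow is allowed only over an explicitly defined conduit."""
    source_zone = ZONES[source]
    destination_zone = ZONES[destination]
    if source_zone == destination_zone:
        # Intra-zone traffic is allowed here; a stricter design may limit it.
        return True
    return protocol in CONDUITS.get((source_zone, destination_zone), set())
```

Because no conduit is defined from the enterprise zone to the control zone, a request from `erp` to `plc-line3` is rejected regardless of protocol, which is the containment property the zoning model is meant to provide.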

Achieving IEC 62443 compliance is not a one-time project but an ongoing programme involving engineering, operations, and cybersecurity teams. It requires incorporating security requirements into procurement specifications, validating vendor solutions, and maintaining documentation of security controls and incident response procedures. When integrated into the broader enterprise architecture function, IEC 62443 becomes a powerful tool for aligning security investments with business priorities and regulatory obligations.

Network segmentation strategies for SCADA systems

Network segmentation is one of the most effective techniques for reducing cyber risk in SCADA environments. Many historical incidents have demonstrated how flat, unsegmented networks allow attackers to move from a compromised workstation to critical control systems with minimal resistance. A well-designed segmentation strategy creates multiple layers of defence, ensuring that any intrusion is contained and monitored.

For industrial enterprises, segmentation typically begins by separating corporate IT networks from OT networks using firewalls and demilitarised zones (DMZs). Within the OT environment, further segmentation isolates SCADA servers, engineering workstations, historian systems, and field devices into distinct zones. Strict access control lists, application-layer gateways, and one-way data diodes may be used to control traffic between these zones, especially where safety or regulatory requirements are stringent.

Modern segmentation strategies also take into account remote access, cloud connectivity, and IIoT devices. Rather than opening broad VPN access to plant networks, organisations increasingly rely on secure remote access platforms that broker connections on a per-session basis, with multi-factor authentication and detailed logging. By combining physical and logical segmentation techniques, industrial enterprises can significantly reduce the blast radius of any potential attack while still supporting the connectivity required for digital transformation.

Identity and access management for industrial applications

Identity and access management (IAM) is a cornerstone of zero trust architecture and a critical enabler of secure digital operations in industrial enterprises. Historically, many OT environments relied on shared accounts, local credentials on devices, and ad hoc access controls. As systems become more interconnected and remote access more common, this approach is no longer sustainable. Centralised, policy-driven IAM is essential to manage who can access which industrial applications and under what conditions.

Modern IAM for industrial operations integrates OT systems with enterprise identity providers, enabling role-based access control, single sign-on, and multi-factor authentication across both IT and OT domains. Engineers, operators, and third-party vendors receive identities that reflect their responsibilities, and their access can be automatically adjusted based on factors such as location, time, and device trust level. This not only strengthens security but also simplifies audits and compliance reporting by providing a single source of truth for access records.

Implementing IAM in industrial environments often requires bridging legacy systems that lack native support for modern protocols such as SAML or OAuth. In these cases, identity-aware proxies or gateway solutions can act as intermediaries, enforcing central policies while presenting compatible interfaces to older systems. Over time, as applications are modernised or replaced, native IAM integration should become a standard requirement, ensuring that security scales alongside digital innovation.

Data analytics platform architecture for industrial intelligence

Industrial enterprises increasingly view data as a strategic asset, but realising its value requires a well-architected data analytics platform tailored to operational realities. This platform must ingest high-frequency sensor data, contextualise it with production and asset information, and deliver actionable insights to both humans and machines. At the same time, it must support AI and machine learning workloads that can predict failures, optimise energy use, and recommend process improvements.

A scalable analytics architecture for industrial intelligence typically combines an IIoT data streaming backbone, a unified namespace or semantic layer, and cloud-based data lakes and warehouses optimised for time-series and event data. Edge analytics handle immediate decisions close to the process, while central platforms perform deeper historical analysis, model training, and cross-site benchmarking. By designing clear data flows and responsibilities across edge, plant, and cloud layers, you avoid the chaos of point-to-point integrations and inconsistent metrics.

To maintain trust in analytics outputs, industrial enterprises must invest in data quality management, metadata catalogues, and governance processes that document how data is transformed and used. Standardising KPIs and calculation logic across plants ensures that comparisons are meaningful and that improvements can be replicated. When combined with intuitive visualisation tools and role-based access to dashboards and reports, the analytics platform becomes a daily decision-making companion rather than a niche specialist tool.
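
Standardising calculation logic is easiest when the KPI lives in one shared function rather than in each plant's spreadsheets. The example below uses Overall Equipment Effectiveness (OEE) purely as an illustration; the source does not name a specific KPI, and the `ShiftData` field names are assumptions. The formula itself is the standard product of availability, performance, and quality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftData:
    planned_time_min: float      # planned production time
    run_time_min: float          # time the line actually ran
    ideal_cycle_time_min: float  # ideal time to produce one unit
    total_count: int             # units produced, good or bad
    good_count: int              # units that passed quality checks


def oee(shift: ShiftData) -> dict[str, float]:
    """Compute OEE and its three factors for one shift."""
    if shift.planned_time_min <= 0 or shift.run_time_min <= 0 or shift.total_count <= 0:
        raise ValueError("planned time, run time, and total count must be positive")
    availability = shift.run_time_min / shift.planned_time_min
    performance = (shift.ideal_cycle_time_min * shift.total_count) / shift.run_time_min
    quality = shift.good_count / shift.total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }
```

When every plant reports through the same function, a difference in OEE between two sites reflects a difference in operations rather than a difference in how downtime or scrap was counted.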

Microservices architecture implementation for industrial applications

Monolithic industrial applications, such as legacy MES or custom-built scheduling tools, often struggle to keep pace with evolving business requirements. Microservices architecture offers an alternative approach, where functionality is decomposed into small, independently deployable services that communicate over well-defined APIs. For industrial enterprises, this pattern can unlock faster feature delivery, improved resilience, and greater flexibility in integrating with diverse equipment and systems.

Implementing microservices in industrial contexts begins with identifying bounded contexts—areas of functionality that can be isolated without disrupting core operations. Examples include work-order management, quality inspection logging, or energy monitoring. Each service is designed around a specific business capability, owns its own data store, and can be scaled independently based on demand. This modularity reduces the risk of change; updating one service does not require redeploying an entire monolithic application.

However, microservices are not a silver bullet. They introduce new challenges in distributed systems management, such as service discovery, observability, and fault tolerance. Industrial enterprises adopting microservices should invest early in supporting platforms—such as Kubernetes, service meshes, and centralised logging and tracing tools—that provide a consistent operational model. When combined with domain-driven design and strong governance, microservices architecture can transform industrial software from a constraint into a strategic enabler of continuous improvement.

Performance optimisation and monitoring strategies for industrial digital platforms

As industrial enterprises digitise more processes and rely on interconnected platforms, performance and reliability become non-negotiable. A scalable digital architecture must therefore be paired with robust performance optimisation and monitoring strategies that provide real-time visibility into system health, quickly pinpoint bottlenecks, and enable proactive remediation before operations are impacted. In many plants, even a few seconds of latency or minutes of downtime can translate directly into lost production and revenue.

Effective monitoring for industrial digital platforms spans multiple layers: network performance between OT and IT domains, application response times, data pipeline throughput, and the health of underlying infrastructure—both on‑premises and in the cloud. Modern observability stacks combine metrics, logs, and distributed traces into a unified view, allowing teams to correlate events and identify root causes more quickly. Threshold-based alerts are often supplemented by anomaly detection algorithms that learn normal patterns and flag subtle deviations that could indicate emerging issues.
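
A simple way to supplement fixed thresholds is a rolling z-score, which compares each new value with the recent mean and standard deviation. The sketch below is a basic statistical baseline rather than a learned model; the class name, window size, and threshold of three standard deviations are illustrative choices.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flag values that deviate strongly from a rolling window of history."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0,
                 min_samples: int = 5):
        if min_samples < 2:
            raise ValueError("min_samples must be at least 2 to compute stdev")
        self._history: deque[float] = deque(maxlen=window)
        self._z_threshold = z_threshold
        self._min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to recent history."""
        anomalous = False
        if len(self._history) >= self._min_samples:
            mu = mean(self._history)
            sigma = stdev(self._history)
            if sigma > 0 and abs(value - mu) / sigma > self._z_threshold:
                anomalous = True
        if not anomalous:
            # Only normal values update the baseline, so a single spike
            # does not widen the band and mask the next one.
            self._history.append(value)
        return anomalous
```

Applied to a metric such as API response time, this flags a sudden jump even when the absolute value is still below a static alert threshold. A sustained shift in the baseline would keep being flagged, so a production version would need a rule for accepting a new normal.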

Performance optimisation is not a one-time exercise but an ongoing practice. As new plants are connected, analytics models are deployed, and user adoption grows, load profiles change and previously adequate designs may become strained. Regular capacity planning, load testing, and architecture reviews help ensure that headroom is maintained and that scaling strategies—whether horizontal scaling of microservices, auto-scaling of cloud resources, or optimisation of database queries—remain effective. By embedding monitoring and optimisation into the lifecycle of industrial digital platforms, enterprises can turn potential performance risks into opportunities for continuous refinement and competitive advantage.